Yan Cui

After reading Ayende’s post today, I got thinking: just what exactly is the cost of a try/catch block, and, more importantly, what is the cost of throwing exceptions?

As Ken Egozi mentioned in the comments, I too believe the test was unfair, as the try/catch block was applied to the top-level code rather than to each iteration. However, based on everything I know about exceptions in .NET, I’d expect any performance cost to come from throwing exceptions rather than from simply trying to catch them. With that in mind, I decided to run my own set of tests covering three simple cases:

1. 10000 invocations of a blank Action delegate. This is the baseline: the cost of iterating through the integers 1 to 10000 plus the cost of invoking a delegate.

2. 10000 invocations of a blank Action delegate INSIDE a try/catch block. Compared with 1, this should show the additional cost of trying to catch an exception.

3. 10000 invocations of an Action delegate that throws an exception INSIDE a try/catch block. Compared with 2, this should show the additional cost of throwing and catching the exception.
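This is not the code posted on GitHub; it is a minimal C# sketch of the three cases, assuming a modern .NET SDK (C# 9 or later, for top-level statements). The names `Baseline`, `WithTryCatch`, `WithThrow`, `AverageMs`, `Iterations` and `Repeats` are hypothetical, and the sketch runs 10 timed batches per case instead of the post's 100 to keep the run short.

```csharp
using System;
using System.Diagnostics;

const int Iterations = 10_000; // delegate invocations per batch, as in the post
const int Repeats = 10;        // hypothetical: the post used 100, lowered to keep the run short

Action blank = () => { };                                     // does nothing
Action thrower = () => throw new InvalidOperationException(); // always throws
int caught = 0;                                               // exceptions caught, warm-up included

// Case 1: baseline cost of the loop plus one delegate invocation per iteration.
void Baseline()
{
    for (int i = 0; i < Iterations; i++) blank();
}

// Case 2: the same empty delegate, with a try/catch around every invocation.
void WithTryCatch()
{
    for (int i = 0; i < Iterations; i++)
    {
        try { blank(); }
        catch (InvalidOperationException) { caught++; }
    }
}

// Case 3: a delegate that throws on every invocation, caught on every iteration.
void WithThrow()
{
    for (int i = 0; i < Iterations; i++)
    {
        try { thrower(); }
        catch (InvalidOperationException) { caught++; }
    }
}

// Runs one untimed batch to warm up the JIT, then returns the average
// elapsed milliseconds over `Repeats` timed batches.
double AverageMs(Action batch)
{
    batch();
    var sw = Stopwatch.StartNew();
    for (int r = 0; r < Repeats; r++) batch();
    sw.Stop();
    return sw.Elapsed.TotalMilliseconds / Repeats;
}

double baselineMs = AverageMs(Baseline);
double tryCatchMs = AverageMs(WithTryCatch);
double throwMs = AverageMs(WithThrow);

Console.WriteLine($"blank delegate:           {baselineMs:F3} ms");
Console.WriteLine($"inside try/catch:         {tryCatchMs:F3} ms");
Console.WriteLine($"throw + catch every call: {throwMs:F3} ms");
```

Each case gets its own explicit loop, so cases 2 and 3 differ from the baseline only by the try/catch and the throw, not by an extra delegate call. Absolute timings will depend on hardware and .NET version, so the relative differences between the three cases are what matter.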

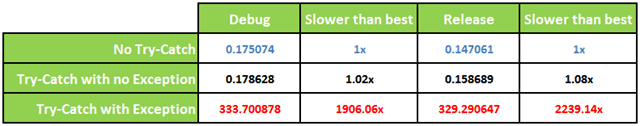

Each test of 10000 invocations are repeated 100 times (no debugger attached) and here are the average execution times in milliseconds:

For anyone who wants to try it out themselves, I’ve posted the code on GitHub here.

As you can see, wrapping a call inside a try/catch block doesn’t add any meaningful cost to how fast your code runs. There is a measurable difference when exceptions are repeatedly thrown, but 300 milliseconds over 10k exceptions equates to over 30k exceptions per second, which, dare I say, is more than anyone would rationally expect from a real-world application!
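Spelling out that arithmetic with the post’s own figure of roughly 300 ms for 10,000 thrown exceptions:

$$
\frac{300\ \text{ms}}{10\,000\ \text{exceptions}} = 0.03\ \text{ms per exception},
\qquad
\frac{10\,000\ \text{exceptions}}{0.3\ \text{s}} \approx 33\,333\ \text{exceptions per second}
$$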

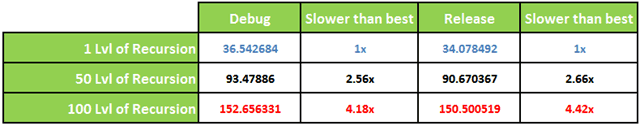

Another thing to consider is the impact the stack trace depth has on the cost of throwing exceptions, to simulate that I put together a second test which executes a method recursively until it reaches the desired level of recursion then throws an exception. Each test performs this recursion 1000 times, and repeated over 100 times to get a more accurate reading. Here are the average execution times in milliseconds:

The good news is that the cost of going deeper before throwing an exception appears to grow linearly rather than exponentially.
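To try the stack-depth experiment yourself, here is a minimal C# sketch, assuming a modern .NET SDK (C# 9 or later, for top-level statements and attributes on local functions). It is not the code posted on GitHub: `Recurse`, `ThrowsPerTest` and `Repeats` are hypothetical names, the depths 1, 10 and 100 are hypothetical choices (the depths actually measured appear only in the chart), and it runs 10 timed batches per depth instead of 100.

```csharp
using System;
using System.Diagnostics;
using System.Runtime.CompilerServices;

const int ThrowsPerTest = 1_000; // recursions (and throws) per test, as in the post
const int Repeats = 10;          // hypothetical: the post used 100, lowered to keep the run short
int[] depths = { 1, 10, 100 };   // hypothetical depths, not the ones the post measured
int caught = 0;

foreach (int depth in depths)
{
    var sw = new Stopwatch();
    for (int r = 0; r < Repeats; r++)
    {
        sw.Start();
        for (int i = 0; i < ThrowsPerTest; i++)
        {
            try { Recurse(depth); }
            catch (InvalidOperationException) { caught++; }
        }
        sw.Stop();
    }
    // Average elapsed time per batch of ThrowsPerTest throws at this depth.
    Console.WriteLine($"depth {depth,3}: {sw.Elapsed.TotalMilliseconds / Repeats:F3} ms");
}

// Recurses `remaining` levels, then throws, so the exception has to unwind
// through every one of those frames before the caller's catch block runs.
// NoInlining and the "+ 1" (which makes the recursive call a non-tail call)
// stop the JIT from collapsing the frames.
[MethodImpl(MethodImplOptions.NoInlining)]
static int Recurse(int remaining)
{
    if (remaining == 0) throw new InvalidOperationException();
    return Recurse(remaining - 1) + 1;
}
```

Without those two guards, inlining or tail-call optimization could leave fewer real stack frames than the requested depth, which would understate the cost of deep stacks.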

Closing thoughts…

Before I go, I must make it clear that exceptions are necessary, and the ability to throw meaningful exceptions is a valuable tool for us developers to validate business rules and communicate any violations to the consumers of our code. As for these performance tests, they’re merely intended to make you aware that there IS a cost associated with throwing exceptions, not to mention a little bit of fun!

Ultimately, throwing exceptions means doing work, and doing work takes CPU cycles, so you really shouldn’t be surprised to see that there’s a cost to throwing exceptions. Personally, I take my exceptions seriously: on every project I have worked on, I have taken the time to define a set of clear and meaningful exceptions, each with a unique error code ;-)

The question you gotta ask yourself is this: would you trade 0.03 ms per exception in exceptional cases for cleaner, more concise code that lets you communicate errors to users more clearly and helps you debug and fix bugs much more easily? Knowing that you’d end up paying for the alternative in code maintenance and debugging time anyway, I sure as hell know which one I’d prefer! Remember: avoid premature optimization; profile your application, target the real problems, and then optimize.
