Yan Cui


We recently found out about an interesting, undocumented behaviour of Amazon’s Elastic Load Balancing (ELB) service – that health check pings are performed by each and every instance running your ELB service at every health check interval.

Intro to ELB

But first, let me fill in some background information for readers who are not familiar with the various services that are part of the AWS ecosystem.

ELB is a managed load balancing service that automatically scales up and down based on traffic. You can easily set up periodic health check pings against your EC2 instances to ensure that requests are only routed to availability zones and instances that are deemed healthy based on the results of the pings.

In addition, you can use ELB’s health checks in conjunction with Amazon’s Auto Scaling service to ensure that instances that repeatedly fail health checks are replaced with fresh, new instances.

Generally speaking, the ELB health check should ping an endpoint on your web service (and not the default IIS page…) so that a successful ping can at least tell you that your service is still running. However, given the number of external services our game servers typically depend on (distributed cache cluster, other AWS services, Facebook, etc.), we decided to make our ping handlers do more extensive checks to make sure that the instance can still communicate with those services (which might not be the case if the instance is experiencing networking/hardware failures).

Some ELB caveats

However, we noticed that at peak times our cluster of Couchbase nodes was hit with a thousand pings at exactly the same time from our 100+ game servers at every ELB health check interval, and the number of hits just didn’t make sense to us! The AWS support team were very helpful and shared the ELB logs with us, which revealed that 8 health check pings were issued against each game server at each interval.

It turns out that each instance that runs your ELB service (the number of instances varies depending on the current load) will ping each of your instances behind the load balancer once per health check interval, all at exactly the same time.
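
To put numbers on this fan-out, here is a small sketch of the arithmetic. The function name and the figure of exactly 100 game servers are assumptions for illustration (we had "100+"); the 8 pings per server comes from the ELB logs mentioned above.

```python
def pings_per_interval(backend_instances: int, elb_instances: int) -> int:
    """Total health check pings received by the whole fleet in one interval.

    Every ELB instance pings every backend instance once per interval,
    so the total is simply the product of the two counts.
    """
    return backend_instances * elb_instances


# Assumed lower bound of 100 game servers, with the 8 ELB instances
# implied by the 8 pings per server seen in the logs.
total = pings_per_interval(backend_instances=100, elb_instances=8)
print(total)
```

If every ping handler also calls out to a shared dependency such as a cache cluster, that dependency sees the same multiplied load, all arriving at the same moment.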

A further caveat is that for an instance to be considered unhealthy, it needs to fail the required number of consecutive pings from an individual ELB instance’s perspective. This means it’s possible for your instance to be considered unhealthy from the ELB’s point of view without having failed the required number of consecutive pings from its own perspective.

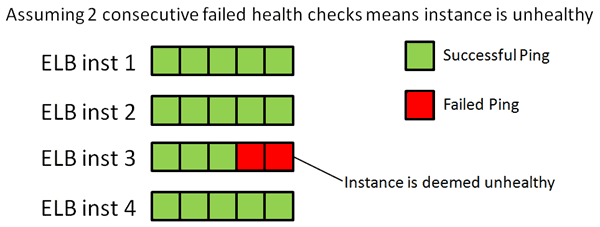

To help us visualize this problem, suppose there are currently 4 instances running the ELB service for your environment, labelled ELB inst 1-4 in the diagram above. Let’s assume that the instance behind the ELB always receives pings from the ELB instances in the same order, from ELB inst 1 to 4. So from our instance’s point of view, it receives pings from ELB inst 1, then ELB inst 2, then ELB inst 3 and so on.

Each of these ELB instances will ping your instances once per health check interval. In the last 2 intervals, the instance failed to respond in a timely fashion to the ping from ELB inst 3, but responded successfully to all other pings. So from our instance’s point of view it has failed 2 out of 8 pings, but not consecutively. From ELB inst 3’s perspective, however, the instance has failed 2 consecutive pings, so it should be considered unhealthy and stop receiving requests until it passes the required number of consecutive pings.
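
The scenario can be replayed in a few lines of code. This is only a sketch of the counting logic as we understand it, not the ELB's actual implementation; the function names and the log format are made up for illustration, and recovery after passing pings is not modelled.

```python
from collections import defaultdict


def elb_instances_seeing_unhealthy(ping_log, threshold):
    """Return the ELB instances that observed `threshold` consecutive failures.

    `ping_log` is a list of (elb_instance, succeeded) tuples in arrival order.
    Each ELB instance keeps its own failure streak.
    """
    streak = defaultdict(int)
    flagged = set()
    for elb_instance, succeeded in ping_log:
        streak[elb_instance] = 0 if succeeded else streak[elb_instance] + 1
        if streak[elb_instance] >= threshold:
            flagged.add(elb_instance)
    return flagged


def longest_failure_streak(ping_log):
    """Longest run of failed pings as seen by the instance behind the ELB."""
    longest = current = 0
    for _, succeeded in ping_log:
        current = 0 if succeeded else current + 1
        longest = max(longest, current)
    return longest


# Two intervals, pings arriving in order from ELB inst 1 to 4,
# with only the ping from ELB inst 3 failing each time.
ping_log = [(elb, elb != 3) for _ in range(2) for elb in (1, 2, 3, 4)]

print(longest_failure_streak(ping_log))             # instance's own view
print(elb_instances_seeing_unhealthy(ping_log, 2))  # per-ELB-instance view
```

The instance never sees two failures in a row, yet ELB inst 3 reaches a streak of 2 and would flag it as unhealthy.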

Since the implementation details of the ELB are abstracted away from us (and rightly so!), it’s difficult for us to test what happens in this situation: whether all other ELB instances will straight away stop routing traffic to that instance, or whether it’ll only impact the routing choices made by ELB inst 3.

From an implementation point of view, I can understand why it was done this way: simplicity is the likely answer, and it does cover all but the most unlikely of events. However, from the point of view of an end user of a service that’s essentially a black box, the promised (or at the very least the expected) behaviour of the service is subtly different from what happens in reality, and some implicit assumptions were made about what we will be doing in the ping handler.

The behaviours we expected from the ELB were:

- ELB will ping our servers at every health check interval

- ELB will mark an instance as unhealthy if it fails x number of consecutive health checks

What actually happens is:

- ELB will ping our servers numerous times at every health check interval depending on the number of ELB instances

- ELB will mark an instance as unhealthy if it fails x number of consecutive health checks by a particular ELB instance

Workarounds

If there are expensive health checks that you would like to perform on your service, but you would still like to use the ELB health check mechanism to stop traffic from being routed to bad instances and have the Auto Scaling service replace them, one simple workaround is to perform the expensive operations in a timer event which you control, and let your ping handler simply respond with an HTTP 200 or 500 status code depending on the result of the last internal health check.
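
A minimal sketch of this workaround in Python, using only the standard library. The `CachedHealth` class, `make_ping_handler`, and the `check` callable are hypothetical names for illustration; our actual game servers were not written this way. Reporting unhealthy until the first check has passed is also just one possible choice.

```python
import threading
from http.server import BaseHTTPRequestHandler


class CachedHealth:
    """Runs an expensive check on its own schedule and caches the result."""

    def __init__(self, check, interval_seconds):
        self._check = check            # hypothetical callable returning True/False
        self._interval = interval_seconds
        self._healthy = False          # assumption: unhealthy until first success
        self._lock = threading.Lock()
        self._stop = threading.Event()

    def refresh(self):
        """Run the expensive check once and store the outcome."""
        try:
            result = bool(self._check())
        except Exception:
            result = False             # a crashing check counts as a failure
        with self._lock:
            self._healthy = result

    def status_code(self):
        """Cheap lookup for the ping handler: 200 if healthy, else 500."""
        with self._lock:
            return 200 if self._healthy else 500

    def start(self):
        """Refresh on a daemon thread every `interval_seconds` until stop()."""
        def loop():
            while not self._stop.is_set():
                self.refresh()
                self._stop.wait(self._interval)
        threading.Thread(target=loop, daemon=True).start()

    def stop(self):
        self._stop.set()


def make_ping_handler(health):
    """Build a request handler that only reads the cached result."""
    class PingHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            self.send_response(health.status_code())
            self.end_headers()
    return PingHandler
```

To try it, create a `CachedHealth` with your own check function, call `start()`, and pass `make_ping_handler(health)` to `http.server.HTTPServer`. However many ELB instances ping the endpoint, the expensive check only runs once per timer interval.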
