Yan Cui

I help clients go faster for less using serverless technologies.

One of my key takeaways from Tammer Saleh’s microservices anti-patterns talk at CraftConf is that we need to record correlation IDs to help us debug microservices.

Why do we need Correlation IDs?

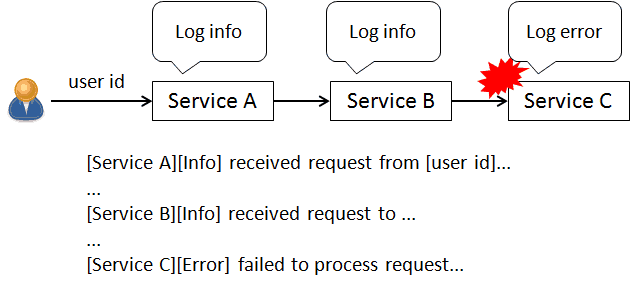

Suppose a user request comes in and after various aspects of the request has been handled by several services something goes wrong.

Even though every service would have been logging along the way it’s no easy task to find all the relevant log messages for this request amongst millions of log messages!

This is the problem that correlation IDs help us solve.

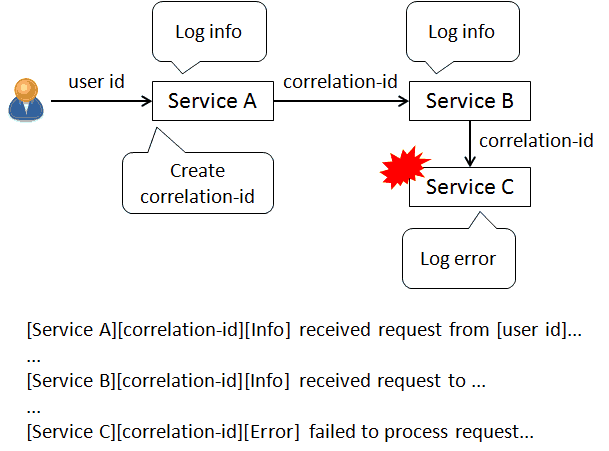

The idea is simple. When a user-facing service receives a request it’ll create a correlation ID, and:

- pass it along in the HTTP header to every other service

- include it in every log message

The correlation ID can then be used to quickly find all the relevant log messages for this request (very easy to do in Elasticsearch).

Additionally, you might wish to pass along other useful contextual information (such as payment ID, tournament ID, etc.) about the request to downstream services. Otherwise they would only appear in log messages from services where they originated from.

It’s helpful to have these contextual information in the error log message so that you don’t have to scan through all relevant messages (by correlation ID) to piece them together.

Whilst it’s a very simple pattern, it’s still one that has to be applied in every log message and every HTTP request and HTTP handler. That’s a lot of unwanted cognitive and development overhead.

In his talk,Tamer suggested that it’s just a fact of life that you have to live with, and that every team has to remember to implement this pattern in every service they create.

To me that would be setting my fellow developers up to fail – someone is bound to forget at one time or another and the whole thing falls down like a house of cards.

I wanted a more systematic approach for our team.

The Approach

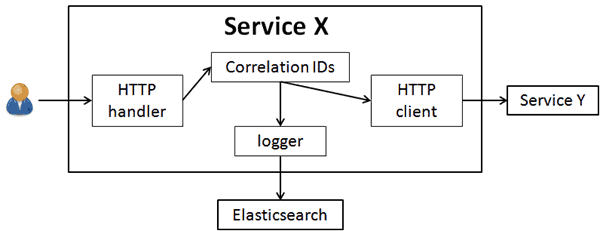

When it comes to implementation patterns like this, my natural tendency is to automate them with PostSharp. However in this case, it doesn’t seem to be a suitable solution because we need to control too many different components:

- HTTP handler to parse and capture correlation ID passed in via HTTP headers

- logger to inject the captured correlation IDs

- HTTP client to include captured correlation IDs as HTTP headers

Instead, this looks like a battle that needs to be fought on multiple fronts.

Fortunately we already have abstractions in the right places!

Logger

Our custom log4net appender can be augmented to look for any captured correlation IDs whenever it logs a message to Elasticsearch so they each appear as a separate, searchable field.

HTTP Client

We also have a custom HttpClient that wraps the BCL HttpClient so that:

- on timeouts, the client throws a TimeoutException instead of the confusing TaskCanceledException

- by default, there is a short timeout of 3 seconds

- by default, the client allows 10 consecutive timeouts before tripping the circuit breaker for 30 seconds (via Polly)

- enables HTTP caching (which by default, is disabled for some reason)

- has built-in support for HEAD, OPTIONS and PATCH

This HttpClient is also augmented to look for any captured correlation IDs and send them along as headers in every HTTP call.

Providing the Correlation IDs

For the logger and HTTP client, they need to have a consistent way to get the captured correlation IDs for the current request. That’s the job of the CorrelationIds class below.

The tricky thing is that depending on the hosting framework for your web application – we use both Nancy and Asp.Net WebApi – you might need a different way to track the correlation IDs, hence the ICorrelationIdsProvider abstraction.

Rather than forcing the consumer to call CorrelationIds.ProvidedBy, I could have tied in with an IoC container instead. I chose not to because I don’t want to be tied down by our current choice of IoC container.

HTTP Handler (Nancy)

Both Nancy and Asp.Net have mechanisms for injecting behaviour at various key points in the HTTP request processing pipeline.

In Nancy’s case, you can have a custom bootstrapper:

But we still need to implement the aforementioned ICorrelationIdsProvider interface.

Unfortunately, I can’t use HttpContext.Current in Nancy, so we need to find an alternative way to ensure the captured correlation IDs flow through async-await operations. For this I used a modified version of Stephen Cleary’s AsyncLocal to make sure they flow through the ExecutionContext.

A simplified version of the NancyCorrelationIdsProvider implementation looks like the following:

HTTP Handler (Asp.Net)

For Asp.Net WebApi projects, since we can rely on HttpContext.Current to do the dirty work, so the implementation for ICorrelationIdsProvider becomes a trivial exercise.

What wasn’t immediately obvious to me, was how to tap into the request processing pipeline in a way that can be reused. After some research, custom HTTP modules seem to be the way to go.

However, with custom HTTP modules, they still need to be registered in the web application in the web.config.

So, that’s it, what do you think of our approach?

This is the best I have come up with so far, and it has a number of obvious limitations:

- usable only from .Net, our Erlang-based services would also need something similar

- only works with web services, and doesn’t extend to queue workers (for Amazon SQS) and stream processors (for Amazon Kinesis)

- still require initial wiring up (can be mitigated with scaffolding)

If you have some ideas for improvement I would very much like to hear it!

Links

- CraftConf 15 – Takeaways from “Microservices Anti-patterns”

- Design Pattern Automation

- Implicit Async Context (“AsyncLocal”)

- ExecutionContext vs SynchronizationContext

Whenever you’re ready, here are 3 ways I can help you:

- Production-Ready Serverless: Join 20+ AWS Heroes & Community Builders and 1000+ other students in levelling up your serverless game. This is your one-stop shop for quickly levelling up your serverless skills.

- I help clients launch product ideas, improve their development processes and upskill their teams. If you’d like to work together, then let’s get in touch.

- Join my community on Discord, ask questions, and join the discussion on all things AWS and Serverless.

Great post, really helps getting into the topic!

Would it be possible to build this into a library that uses introspection for automatically applying those code pieces to a project?

On the client side, all the work went into our custom HTTP client so that was easy.

On the server side, there is a bit of boilerplate which I couldn’t wrap into a library, so we used project template to do that instead – we wrote some scripts similar to https://github.com/fsprojects/ProjectScaffold so as a developer I just need to run a batch cmd to scaffold a new project and fix the compile errors (deliberately left gaps in the code so that it won’t compile right away).

You could, using PostSharp to do IL rewrite but it depends on how your web framework of choice works. eg with Asp.Net you still have to configure the custom HTTP module somehow (eg via web.config).

Great stuff – thanks! I’m surprised to find little else on correlation tracking with Nancy.

Could you also share your changes to AsyncLocal, if you still have them? With Stephen Cleary’s original code (on GitHub), every instantiation of AsyncLocal creates a new slot name and thus a new dictionary. Not sure what I’m missing.

That was one of the things we had to change, I don’t have access to the codebase anymore (left the company almost a year ago) but *I think* the modification was to allow you to pass in an optional slot name in the constructor and default to the one if you don’t (rather than use a different slot name each time).

Thanks – I’ll give it a shot!

How does this not have synchronisation issues with using a static property in the CorrelationIds class. Surely because it’s static it’s shared across the lifetime of the application and not per request? Confused!

Scratch my last comment, whats missing is the AspNetCorrelationIdsProvider.cs