Yan Cui

NOTE: read the rest of the series, or check out the source code.

If you enjoy reading these exercises then please buy Crista’s book to support her work.

Following on from the last post, we will look at the Cookbook style today.

Style 4 – Cookbook

Although Crista has called this the Cookbook style, you’d probably know it as Procedural Programming.

Constraints

- No long jumps

- Complexity of control flow tamed by dividing the large problem into smaller units using procedural abstraction

- Procedures may share state in the form of global variables

- The large problem is solved by applying the procedures, one after the other, that change, or add to, the shared state

As stated in the constraints, we need to solve the term frequencies problem by building up a sequence of steps (i.e. procedures) that modify some shared state along the way.

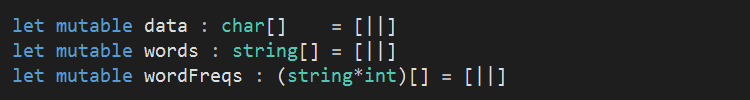

So first, we’ll define the shared state we’ll use to:

- hold the raw data that is read from the input file

- hold the words that will be considered for term frequencies

- hold the associated frequency for each word

Procedures

I followed the basic structure that Crista laid out in her solution:

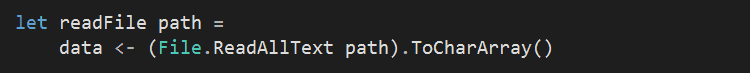

- read_file : reads entire content of the file into the global variable data;

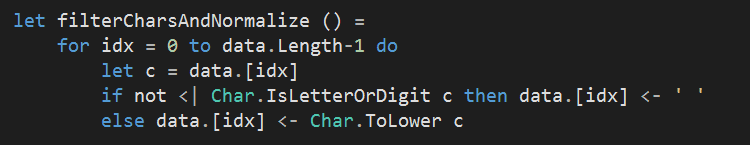

- filter_chars_and_normalize : replaces all non-alphanumeric characters in data with white space;

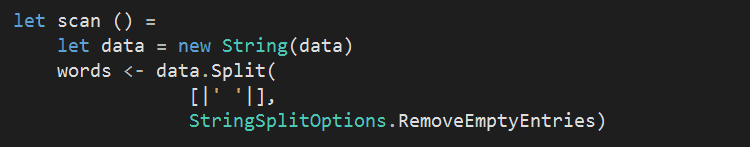

- scan : scans the data for words, and adds them to the global variable words;

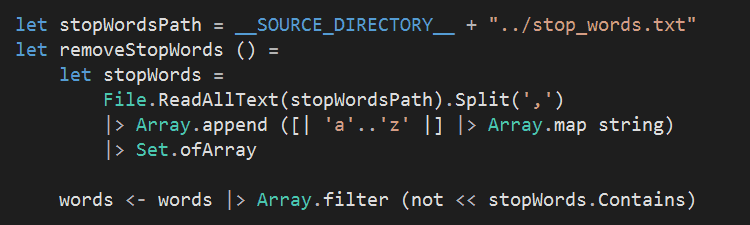

- remove_stop_words : loads the list of stop words; appends the single-letter words to that list; removes all stop words from the global variable words;

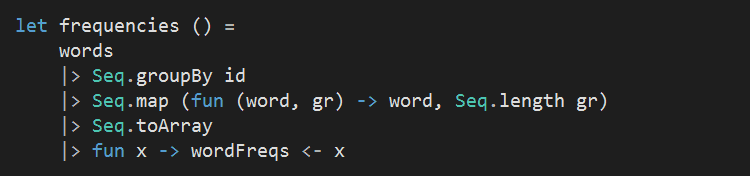

- frequencies : creates a list of pairs associating words with frequencies;

- sort : sorts the contents of the global variable wordFreqs in decreasing order of frequency
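For reference, the six procedures listed above can be sketched in Python, in the spirit of Crista's original solution rather than the F# version discussed in this post. Treat it as a minimal sketch under a few assumptions: the stop words file is assumed to be comma-separated, the file paths are passed in as parameters, and lower-casing is assumed to be part of "normalize" (the name suggests it, but the list above does not spell it out).

```python
import string

# Shared mutable state, changed by every procedure below.
data = []        # raw characters read from the input file
words = []       # words considered for term frequencies
word_freqs = []  # [word, count] pairs


def read_file(path_to_file):
    """Read the entire file into the shared `data`, one character per item."""
    with open(path_to_file, encoding="utf-8") as f:
        data.extend(f.read())


def filter_chars_and_normalize():
    """Replace non-alphanumeric characters in `data` with a space, in place.

    Lower-casing is an assumption about what "normalize" means.
    """
    for i, c in enumerate(data):
        data[i] = c.lower() if c.isalnum() else " "


def scan():
    """Join `data` back into a string, split on white space, add to `words`."""
    words.extend("".join(data).split())


def remove_stop_words(path_to_stop_words):
    """Remove stop words and single-letter words from `words`, in place.

    Assumes the stop words file is a comma-separated list.
    """
    with open(path_to_stop_words, encoding="utf-8") as f:
        stop_words = set(f.read().strip().split(","))
    stop_words.update(string.ascii_lowercase)
    words[:] = [w for w in words if w not in stop_words]


def frequencies():
    """Add one [word, count] pair per distinct word to `word_freqs`."""
    counts = {}
    for w in words:
        counts[w] = counts.get(w, 0) + 1
    word_freqs.extend([w, n] for w, n in counts.items())


def sort():
    """Sort `word_freqs` in place, in decreasing order of frequency."""
    word_freqs.sort(key=lambda pair: pair[1], reverse=True)
```

None of the procedures returns anything; each one only makes sense when it is called after the one before it, because each reads what the previous one left in the shared state.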

As you can see, readFile is really straightforward. I chose to store the content of the file as a char[] rather than a string because it simplifies filterCharsAndNormalize:

- it allows me to use Char.IsLetterOrDigit

- it gives me mutability (I can replace the individual characters in place)

The downside of storing data as a char[] is that I will then need to construct a string from it when it comes to splitting it up by white space.

To remove the stop words, I loaded the stop words into a Set because it provides a more efficient lookup.
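To illustrate why a set helps here, consider this small Python sketch. The stop words and input words are made up for illustration. Python's set is hash-based whereas F#'s Set is tree-based, but both avoid scanning the whole collection for every word.

```python
# Hypothetical stop words and input, for illustration only.
stop_word_list = ["a", "an", "and", "the", "of"]
stop_word_set = set(stop_word_list)

words = ["the", "cat", "and", "the", "hat"]

# Both filters keep the same words. The list version compares each word
# against every stop word in turn; the set version does one lookup per word.
kept_via_list = [w for w in words if w not in stop_word_list]
kept_via_set = [w for w in words if w not in stop_word_set]
```

With a handful of stop words the difference is negligible; it starts to matter when every word of a whole book is checked against a few hundred stop words.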

For the frequencies procedure, I would have liked to simply return the term frequencies as output, but the constraint says that:

The large problem is solved by applying the procedures, one after the other, that change, or add to, the shared state

So, unfortunately, we’ll have to update the wordFreqs global variable instead…

And finally, sort is straightforward thanks to Array.sortInPlaceBy:

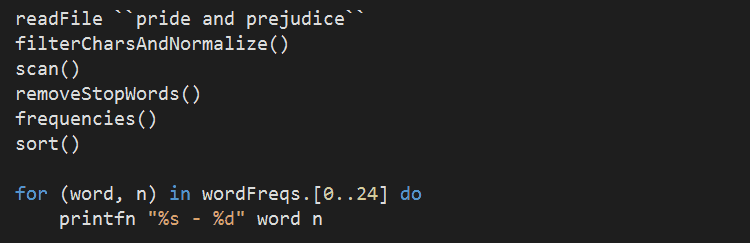

To tie everything together and get the output, we simply execute the procedures one after another and then print out the first 25 elements of wordFreqs at the end.

Conclusions

Since each procedure modifies shared state, there is a temporal dependency between the procedures. As a result:

- they’re not idempotent – running a procedure twice will cause different/invalid results

- they’re not isolated in mindscape – you can’t think about one procedure without also thinking about what the previous procedure has done to shared state and what the next procedure expects from the shared state
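The first point is easy to reproduce. Below is a stripped-down Python sketch (not the code from this exercise; the words are made up and the shared state is reduced to a dictionary) in which running the same procedure twice silently corrupts the result:

```python
# Shared state, reduced to the bare minimum for illustration.
words = ["cat", "hat", "cat"]
word_freqs = {}


def frequencies():
    """Add the count of every word in `words` to the shared `word_freqs`."""
    for w in words:
        word_freqs[w] = word_freqs.get(w, 0) + 1


frequencies()
first_run = dict(word_freqs)   # {'cat': 2, 'hat': 1}

frequencies()
second_run = dict(word_freqs)  # {'cat': 4, 'hat': 2}
```

Nothing in the signature of frequencies warns the caller about this; the only way to know it must run exactly once, and only after words has been populated, is to read its body.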

Also, since the expectations and constraints of the procedures are only captured implicitly in their execution logic, you cannot leverage the type system to help communicate and enforce them.

That said, many systems we build nowadays can be described as procedural in essence – most web services for instance – and rely on sharing and changing global state that is proxied through databases or caches.

You can find all the source code for this exercise here.

Little correction: It’s “Cristina” (based on the book’s cover), not Crista. ;)

When I met her at Joy of Coding https://theburningmonk.com/2015/06/joy-of-coding-experience-report/ this year she introduced herself as Crista, and that’s what she used on her own blog as well http://tagide.com/blog/, which is why I’ve used Crista here.

Thanks for pointing that out though, I wasn’t sure which one to use either (and might have used both along the way..) but in the end settled on Crista.