Yan Cui

I help clients go faster for less using serverless technologies.

This year’s NDC Oslo had a strong security theme throughout, and one of Troy Hunt’s talks – 50 Shades of AppSec – was among the top-rated talks at the conference, based on attendee feedback.

Sadly, I missed the talk at the conference, but having just watched it on Vimeo, I was left amused and scared in equal measure, and wondering whether I should ever trust anyone with my personal information again…

Troy started by talking about the democratisation of hacking: anybody can become a hacker nowadays, e.g.:

- a 5-year-old boy who hacked the Xbox;

- Chrome extensions such as Bishop that scan websites for vulnerabilities as you browse;

- online tutorials on how to find a site with an SQL injection vulnerability and paste its link into tools like Havij to download its data;

- or you can go to hackerlist and hire someone to hack for you.

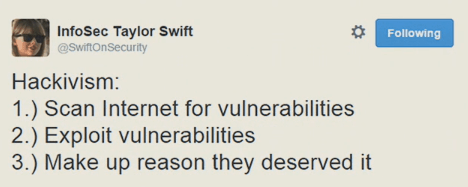

The fact that hacking is so accessible these days has given rise to the culture of hacktivism.

When it comes to hacktivism, besides the kids (or young adults) you keep hearing about on the news, there’s a darker side to the story – criminal hacking.

Criminals are now bringing blackmail and extortion into the digital age, demanding bitcoins to:

- remove your (supposedly compromised) data from the internet;

- refrain from exposing more (supposedly compromised) data;

- refrain from compromising a business’ reputation and online presence

And it’s not just your computer that’s at risk – routers can be hacked to launch spoofing attacks too. This is why encryption and HTTPS are so important.

The good news is that even smart criminals are still fallible and sometimes they get tripped up by the simplest things.

For example, Ross Ulbricht (creator of Silk Road) was caught partly because he used his real name on Stack Overflow, where he had posted a question on how to connect to a hidden Tor site using curl.

Or take Jihadi John, who was identified because he used his UK student ID to get a discount on web development software that he was trying to purchase from a computer in Syria with a Syrian IP address.

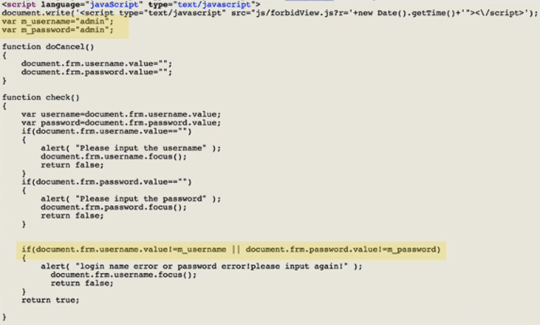

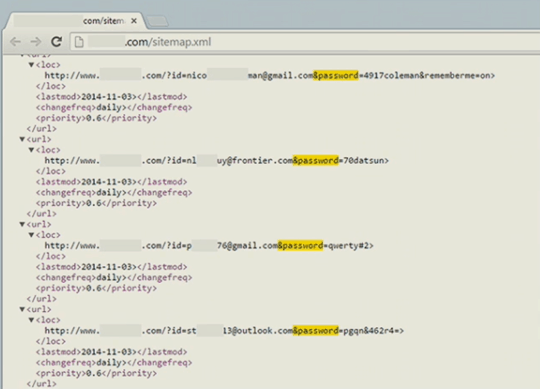

Are we developers making it too easy for criminals? Judging by the horror stories Troy showed (which, admittedly, might be a bit extreme), perhaps we are.

From the horrifyingly obvious…

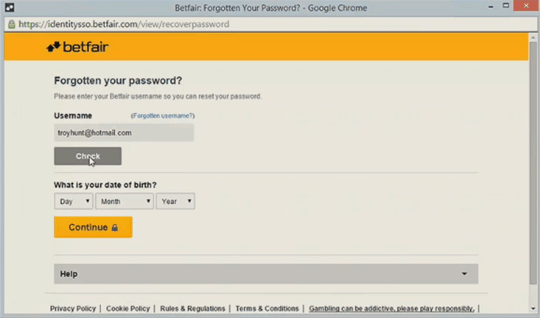

to something a bit more subtle (anyone who knew your email address and date of birth could reset your Betfair password, until Betfair fixed it after Troy wrote a post about it)…

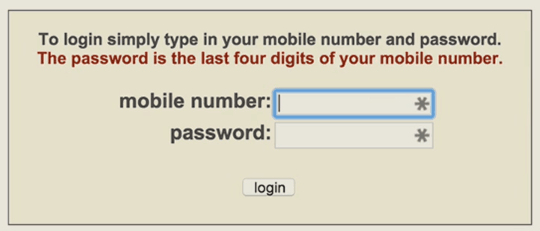

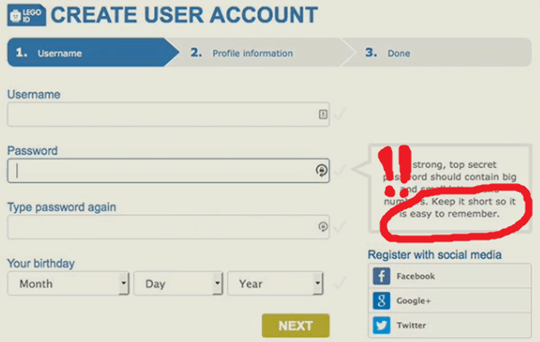

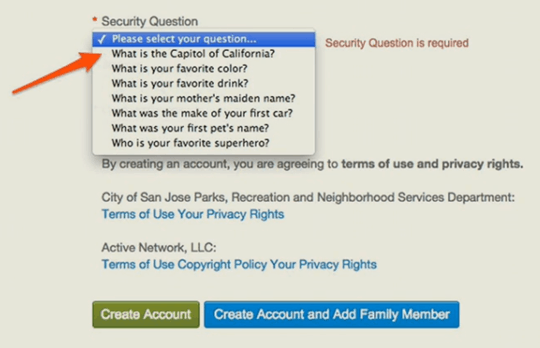
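Knowing someone's email address and date of birth proves very little, since both are often semi-public. One common alternative (not necessarily what Betfair adopted) is to prove that the user controls the inbox by emailing a single-use, expiring, unguessable token. Below is a minimal Python sketch under stated assumptions: the in-memory `_reset_tokens` store, the 15-minute expiry and the `issue_reset_token`/`redeem_reset_token` names are all hypothetical, and sending the email is left out.

```python
import hashlib
import hmac
import secrets
import time
from typing import Dict, Optional, Tuple

# Hypothetical in-memory store: email -> (sha256 of token, expiry timestamp).
# A real system would persist this in a database.
_reset_tokens: Dict[str, Tuple[str, float]] = {}

TOKEN_TTL_SECONDS = 15 * 60  # assumption: tokens expire after 15 minutes


def _hash_token(token: str) -> str:
    # Store only a hash, so a leaked table doesn't hand out working tokens.
    return hashlib.sha256(token.encode("utf-8")).hexdigest()


def issue_reset_token(email: str, now: Optional[float] = None) -> str:
    """Create an unguessable token; in practice it is emailed as part of a reset link."""
    now = time.time() if now is None else now
    token = secrets.token_urlsafe(32)  # 32 random bytes, URL-safe text
    _reset_tokens[email] = (_hash_token(token), now + TOKEN_TTL_SECONDS)
    return token


def redeem_reset_token(email: str, token: str, now: Optional[float] = None) -> bool:
    """Return True only for a matching, unexpired token; a successful redeem consumes it."""
    now = time.time() if now is None else now
    entry = _reset_tokens.get(email)
    if entry is None:
        return False
    stored_hash, expires_at = entry
    if now > expires_at:
        del _reset_tokens[email]  # expired: clean up and reject
        return False
    # Constant-time comparison avoids leaking how much of the hash matched.
    if not hmac.compare_digest(stored_hash, _hash_token(token)):
        return False
    del _reset_tokens[email]  # single use: consumed on success
    return True
```

The design choice here is that only someone who can read the user's inbox can complete the reset, and a token stops working once it has been used or has expired.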

Sometimes we also make it too easy for our users to be insecure. Again, from the obvious…

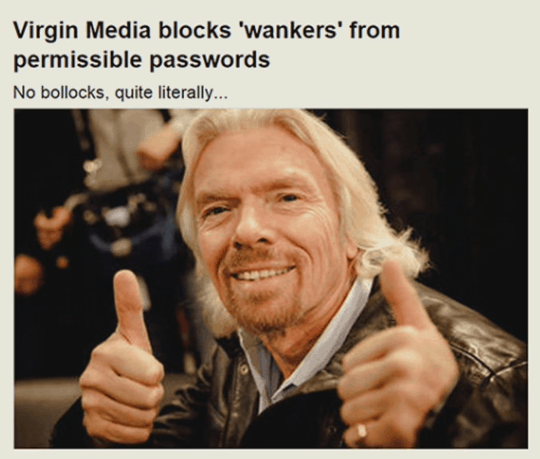

to something more subtle (the following makes you wonder whether passwords are visible in plain text to a human operator who is offended by certain words)…

Notice the recurring theme: the tension between security and usability, e.g. how to make login and password recovery simpler without making them less secure.

Perhaps the problem is that we’re not educating developers correctly.

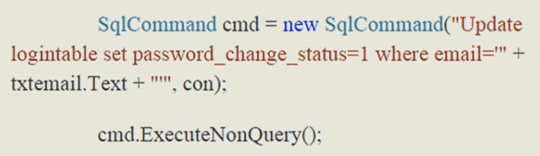

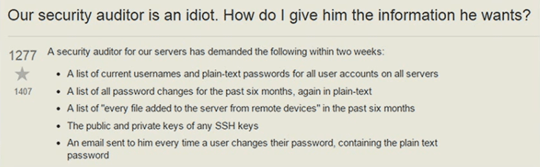

For instance, how is it that in 2015 we’re still teaching developers to implement password reset like this, leaving them wide open to SQL injection attacks?

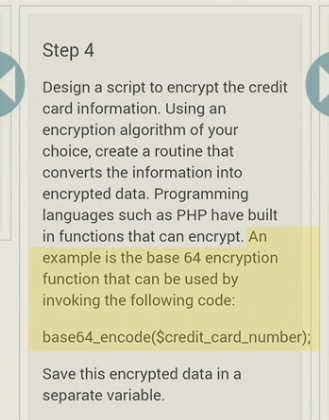
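The screenshot isn't reproduced here, but the usual root cause of SQL injection is building a query by concatenating user input into the SQL text. As a hedged illustration, here is a minimal sketch using Python's built-in `sqlite3` module against a hypothetical `users` table; the same idea (parameterised queries) applies to other database drivers, although the placeholder syntax varies between them.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Hypothetical users table, purely for illustration."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (email TEXT PRIMARY KEY, password_hash TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice@example.com", "hash-a"), ("bob@example.com", "hash-b")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, email: str):
    # VULNERABLE: input is concatenated into the SQL text, so an input
    # such as  ' OR '1'='1  rewrites the WHERE clause.
    sql = "SELECT email FROM users WHERE email = '" + email + "'"
    return conn.execute(sql).fetchall()


def find_user_safe(conn: sqlite3.Connection, email: str):
    # SAFE: the ? placeholder passes the input as a value, never as SQL.
    return conn.execute(
        "SELECT email FROM users WHERE email = ?", (email,)
    ).fetchall()
```

Passing `' OR '1'='1` as the email makes the unsafe version return every row in the table, while the parameterised version simply finds no user whose email is that literal string.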

And how is it that we’re still teaching them that Base64 encoding and ROT13 (or rather, a shift of 5 in this case) are a sufficient form of encryption?

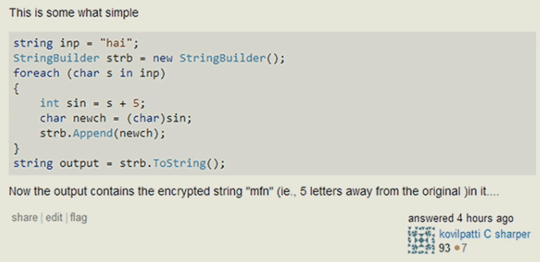
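To make the point concrete: Base64 is an encoding, and a letter rotation such as ROT13 is a trivially reversible substitution, so neither protects anything. The minimal Python sketch below (the `rot` and `brute_force_rot` helpers are hypothetical, written for illustration) decodes Base64 without any key and recovers a rotated string simply by trying all 26 shifts.

```python
import base64
import string
from typing import List


def rot(text: str, shift: int) -> str:
    """Rotate ASCII letters by `shift` places (ROT13 is shift=13); other characters pass through."""
    k = shift % 26
    lower, upper = string.ascii_lowercase, string.ascii_uppercase
    table = str.maketrans(lower + upper, lower[k:] + lower[:k] + upper[k:] + upper[:k])
    return text.translate(table)


def brute_force_rot(ciphertext: str) -> List[str]:
    """Undo every possible shift; with only 26 'keys', one result is the plaintext."""
    return [rot(ciphertext, -k) for k in range(26)]


password = "CorrectHorse"

# Base64 is reversible by anyone: there is no key involved at all.
encoded = base64.b64encode(password.encode("utf-8")).decode("ascii")
recovered = base64.b64decode(encoded).decode("utf-8")

# A shift of 5 (or 13) is found instantly by trying all 26 possibilities.
scrambled = rot(password, 5)
candidates = brute_force_rot(scrambled)
```

For stored passwords, the usual recommendation is not reversible encryption at all but a slow, salted password hash such as bcrypt, scrypt or Argon2.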

It’s not just developers who are at fault, though; sometimes even security auditors are to blame.

And of course, let’s not forget the users.

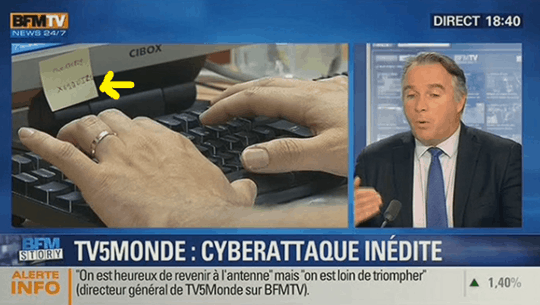

For example, you might not want to have your passwords broadcast on national TV…

But we are also at fault for giving mixed messages to our users.

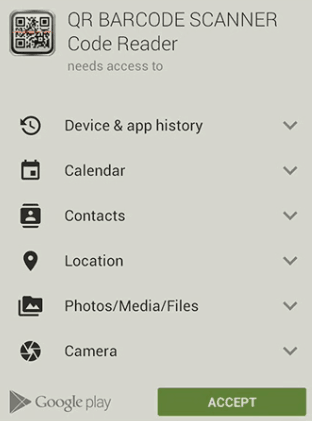

For example, why would a QR code scanner need access to your calendar and contacts? Yet with this UI design, all the user sees is the big green ACCEPT button standing between them and what they’re trying to get done.

And social media is particularly guilty of sending confusing messages.

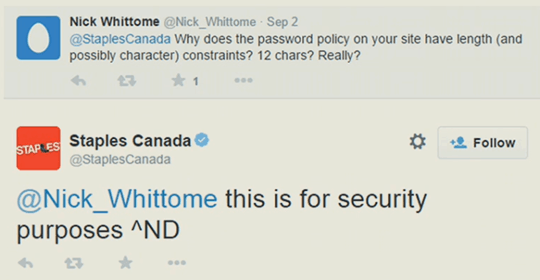

Sometimes they aren’t even trying…

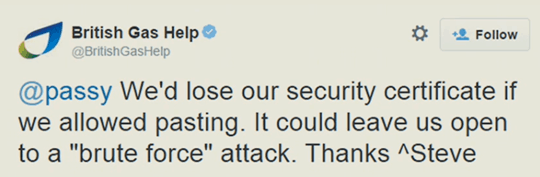

other times they make up things such as “security certificates” and throw scary terms like “brute force attack” at users (how allowing people to paste passwords into the text box leaves you open to one is beyond me)…

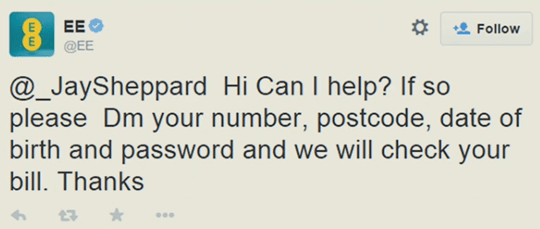

and sometimes we leave users vulnerable to criminals (e.g. what if a criminal were to look for similar responses on EE’s Twitter account and then contact those users from a legitimate-looking account, such as @EE_CustomerService, using the same EE logo?)…

And you can’t talk about security without talking about governments and surveillance (and Bruce Schneier talked about this in great detail in his opening keynote too).

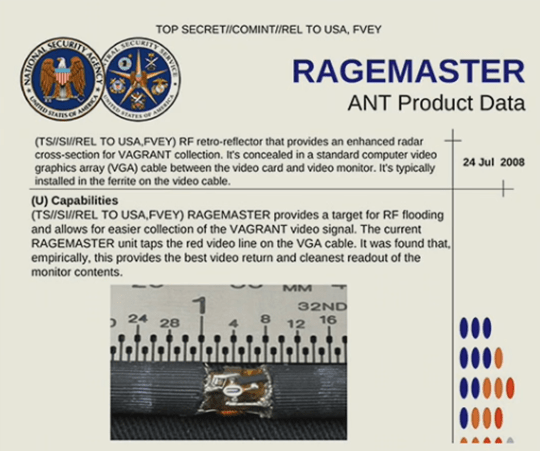

For example, the US government has technology that can tap your VGA cable and see exactly what’s on your monitor.

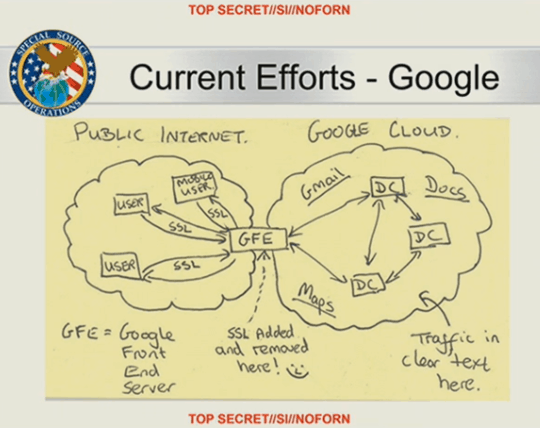

Snowden’s leaks also showed that the US government was examining Google’s infrastructure for opportunities to siphon up all your data.

And the UK’s prime minister, David Cameron, recently called for an end to any form of digital communication that cannot be intercepted by the government’s intelligence agencies!

Finally, appsec gets really interesting when it intersects with the physical world.

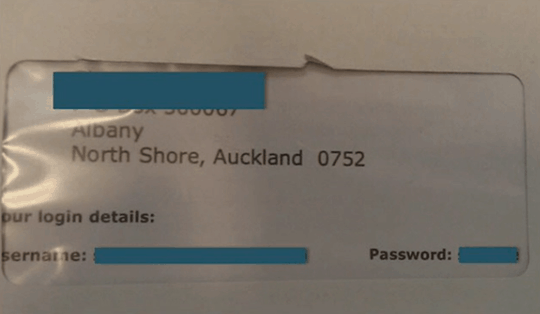

For example, when we blindly apply digital-world practices in the physical world, we sometimes forget simple truths about it, such as the fact that letters are delivered in envelopes with large windows in them…

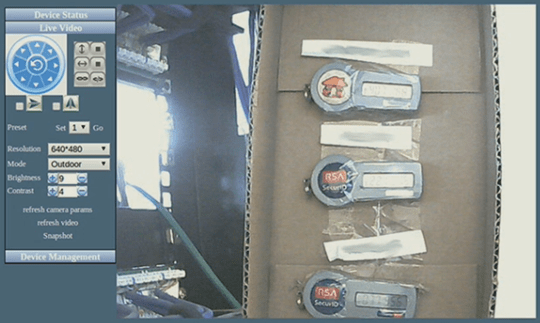

and the physical world has a way of circumventing security measures designed for the digital world…

As the digital and physical worlds become ever more intertwined in the IoT (Internet of Things), security is going to get very interesting.

For example, a vulnerability was found in LIFX light bulbs which leaked credentials for the wireless network, allowing an attacker to compromise your home network.

Whilst LIFX has since fixed the vulnerability, the concern remains that any Wi-Fi-connected device is ultimately vulnerable and may be hacked.

How do we educate developers to defend against these attacks when so many of them work at startups racing to hit the market just to survive? How do we detect these vulnerabilities and investigate them? If your network were compromised, would you look to your light bulb as the entry point of the attack?

There are many questions we don’t even know to ask yet…

As for users, if they’re already struggling to keep their passwords under control, how do we begin to educate them about the dangers they expose themselves to when they purchase these “smart” home appliances?

For anyone who has played Watch Dogs, remember how at the end of the game Aiden Pearce kills Lucky Quinn by hacking his pacemaker? This is the reality of the world we are heading towards, and these kinds of risks will be (are?) very real and would affect all of us. Based on the evidence Troy showed, it’s a world that many of us are not ready for…

Links

- All FP talks at NDC Oslo

- Bruce Schneier – Data and Goliath: The Hidden Battles to Collect Your Data and Control Your World

- James Mickens at Monitorama 2014
