Yan Cui


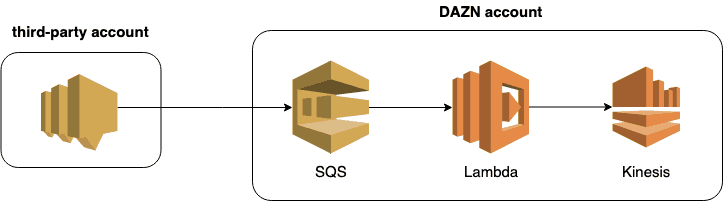

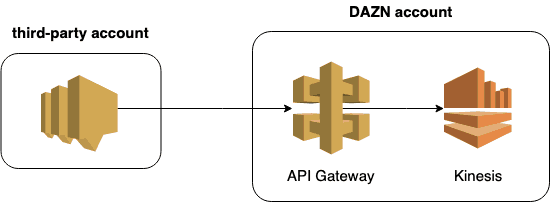

At DAZN (where I no longer work), the teams work with a number of third-party providers. They often have to synchronize data between different AWS accounts. SNS to SQS is the primary mechanism for these cross-account deliveries because:

- it was an established pattern within the organization

- DAZN engineers and third-party engineers are both familiar with SNS and SQS, as well as using Lambda to process SQS events

- Lambda auto-scales the number of concurrent executions based on load

Of course, as I wrote recently, EventBridge (formerly CloudWatch Events) is a great option for cross-account deliveries too. More on this later.

The Problem

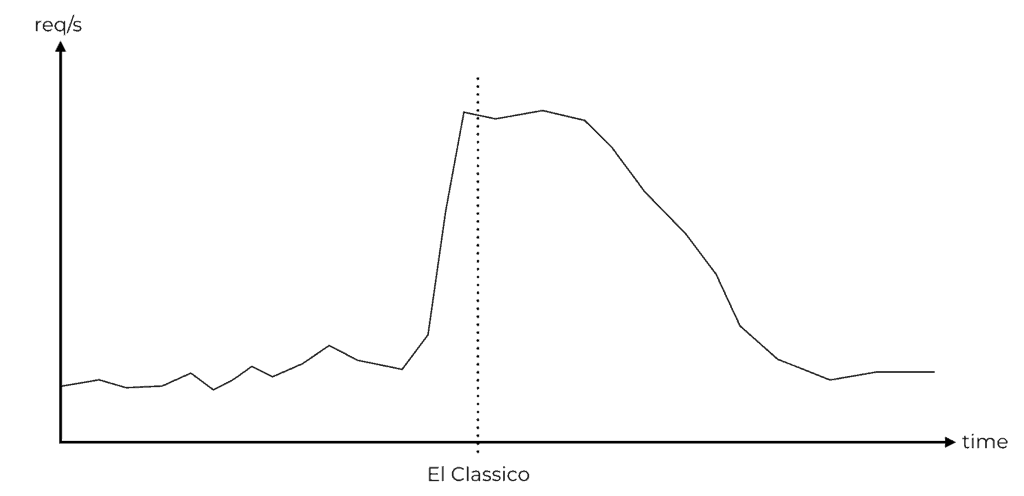

DAZN has many millions of subscribers worldwide and serves over a million concurrent viewers during live events. Its traffic pattern is very spiky and centres around these live sporting events.

Many of DAZN’s microservices live in the same AWS account (they are still in the process of moving to one account per team per environment). So these microservices contend for the same regional limits such as the number of concurrent Lambda executions.

One of the microservices ingests events from a third-party AWS account and immediately pushes them to a Kinesis stream.

This microservice experiences large bursts of traffic immediately before a sporting event starts. Unfortunately, many other microservices also experience these spikes at the same time!

Because Lambda auto-scales the number of concurrent executions for SQS, the Lambda function (that you see above) uses up too much of the available concurrency. This causes Lambda throttling in the region, both for the function itself and for other functions in the same region.

There was time pressure to find a suitable solution, so my buddies at DAZN reached out and we brainstormed some solutions.

Solutions

Solution 1 – Lambda concurrency limit

Setting a reserved concurrency for the SQS function would be the simplest solution. However, you’d have to deal with the fallout from this decision, because combining a Lambda concurrency limit with SQS has known problems. I suggest you read this and this post for more information. The root problem is that there is a disconnect between the number of SQS pollers (managed by Lambda) and your function’s concurrency limit.

If the SQS poller is repeatedly throttled when attempting to forward a message to your function, then the message can be redirected to the DLQ before your function gets a chance to process it. This would be the worst-case scenario.
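To make the worst case concrete, here is a toy model of a single message. It is a simplification under stated assumptions, not how the Lambda poller is implemented: the function name and parameters are made up for illustration, and it only captures the SQS rule that a message is moved to the DLQ once its receive count exceeds the queue's maxReceiveCount.

```python
def deliver_with_throttling(throttled_attempts: int, max_receive_count: int) -> str:
    """Toy model of one SQS message behind a throttled Lambda function.

    throttled_attempts: how many receives end in a throttled invocation
                        (hypothetical input, for illustration only).
    max_receive_count:  the maxReceiveCount of the queue's redrive policy.
    """
    receive_count = 0
    while True:
        # Every receive by the poller increments the receive count,
        # whether or not the function actually gets to run.
        receive_count += 1
        if receive_count > max_receive_count:
            # SQS moves the message to the DLQ instead of delivering it again.
            return "dead-letter queue"
        if receive_count > throttled_attempts:
            # This receive was not throttled, so the function processes it.
            return "processed"
        # Throttled: the message reappears after the visibility timeout.


if __name__ == "__main__":
    print(deliver_with_throttling(throttled_attempts=1, max_receive_count=3))
    print(deliver_with_throttling(throttled_attempts=3, max_receive_count=3))
```

In this model, a message that is throttled on every allowed receive ends up in the DLQ without the function ever seeing it.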

Even if a message is throttled just once and processed after the visibility timeout, it can still cause havoc. This delay (the visibility timeout) allows follow-up events to precede this original message in the Kinesis stream. This ordering issue already exists today because normal SQS queues do not preserve the ordering of events. However, it affects less than 1% of customers and the team does not feel it’s a significant issue. With throttling and retries, it becomes a much more pressing problem for downstream functions.

Solution 2 – use a separate AWS account

Moving this microservice into its own account would alleviate the contention issue (for concurrent executions). Doing this has other benefits too, and is a task that is already in the pipeline. However, the third-party vendor does not currently allow SQS subscription from another DAZN-owned AWS account.

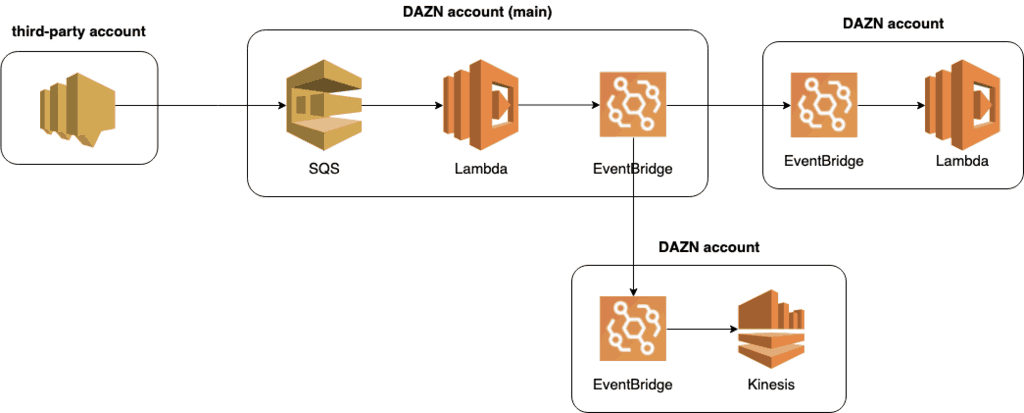

Solution 3 – switch to EventBridge

Switching to EventBridge would be another option. SNS supports few targets for cross-account delivery – HTTP, SQS or Lambda. EventBridge can deliver to far more targets, including Kinesis streams, ECS tasks, Step Functions and more. However, this requires significant changes from the third party. Alternatively, it involves creating a DAZN-side sink in the main account and then using EventBridge to fan out to other accounts (see below).

This could be a viable solution and offers a lot of flexibility going forward. But it also faces a number of challenges, such as:

- If the third-party doesn’t change to EventBridge then you still have the same concurrency issue with the SQS function.

- It requires coordination to move multiple teams to an unfamiliar service.

Most importantly, it’s not a simple change and there is time pressure at play.

Solution 4 – go direct from SNS to Kinesis (via API Gateway)

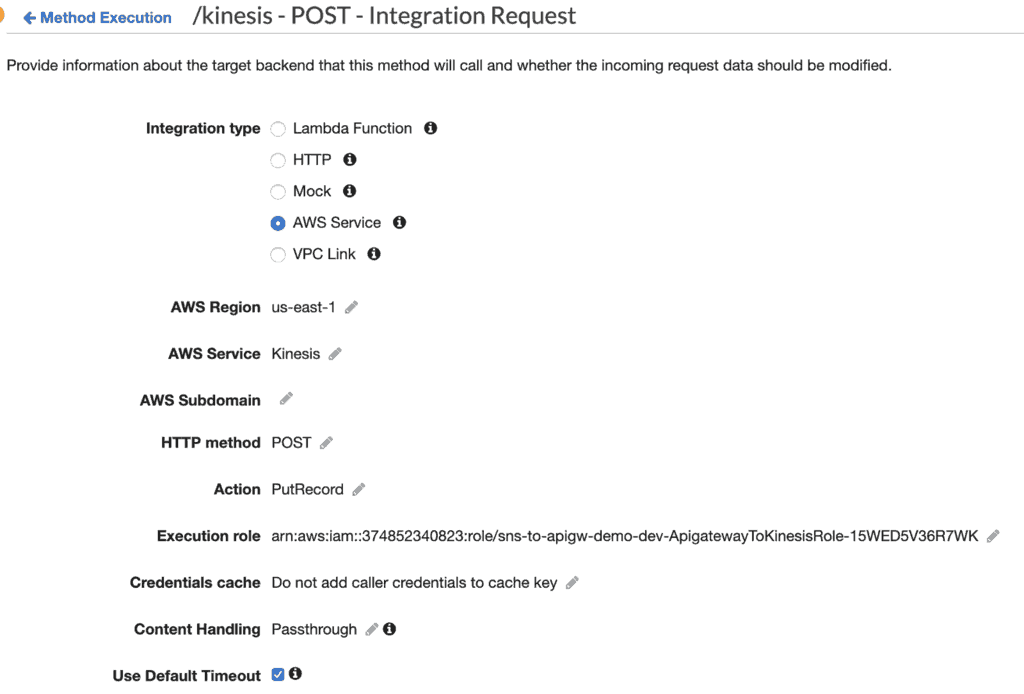

Instead of going through SQS and Lambda, you can go directly to Kinesis via an API Gateway service proxy. This means we’d subscribe an HTTPS endpoint to the third-party SNS topic instead of SQS.

This removes Lambda from the equation completely. However, API Gateway has its own throttling and contention issues. By default, API Gateway has a regional limit of 10,000 reqs/s (for all APIs). Fortunately, this is a soft limit and can be raised via a support ticket.

This was an interesting idea, so I built a simple proof-of-concept to see how it could work. You can find the source code for the demo project on GitHub here.

Connecting SNS to Kinesis via API Gateway

There are a couple of things to note:

- When you subscribe an endpoint to an SNS topic, SNS would first send a POST request to the endpoint to confirm the subscription. This page explains the confirmation flow.

- The POST request contains a JSON payload like the following. You need to send a GET request to the SubscribeURL to confirm the subscription.

    {
      "Type": "SubscriptionConfirmation",
      "MessageId": "eeb2e742-4746-4bf9-9add-32e45df8bfc1",
      "Token": "2336412f37fb687f5d51e6e241dbca52e8c2620f5aa61c0e44f2e34cdac05af6c8d340f1a2314eff0f5dc1ee0f2e949a2fd5aa4f97d5f3e3a428c654a1eb46b096f357977b59f06c8591623d7f102a14d688dcb73291966f6208f2b07037659305f755718536b0b8de10cdb68271266f681ab4c27f6aef458c10f33916b094218854429284d32fdb59aba8fae3caf8e4",
      "TopicArn": "arn:aws:sns:us-east-1:374852340823:sns-to-apigw-demo-dev-Topic-1M7QN8DMGC30Z",
      "Message": "You have chosen to subscribe to the topic arn:aws:sns:us-east-1:374852340823:sns-to-apigw-demo-dev-Topic-1M7QN8DMGC30Z.\nTo confirm the subscription, visit the SubscribeURL included in this message.",
      "SubscribeURL": "https://sns.us-east-1.amazonaws.com/?Action=ConfirmSubscription&TopicArn=arn:aws:sns:us-east-1:374852340823:sns-to-apigw-demo-dev-Topic-1M7QN8DMGC30Z&Token=2336412f37fb687f5d51e6e241dbca52e8c2620f5aa61c0e44f2e34cdac05af6c8d340f1a2314eff0f5dc1ee0f2e949a2fd5aa4f97d5f3e3a428c654a1eb46b096f357977b59f06c8591623d7f102a14d688dcb73291966f6208f2b07037659305f755718536b0b8de10cdb68271266f681ab4c27f6aef458c10f33916b094218854429284d32fdb59aba8fae3caf8e4",
      "Timestamp": "2019-07-21T08:45:10.150Z",
      "SignatureVersion": "1",
      "Signature": "ZrgCtseuxHccpu3TYUwiTA4UReRe3ps49+JH4mM7ujgW778OHizjlt/x2aUDM5Jx8P5v/3lA1i0zXSV+9HEp+dCW1U7ZuurRMszSEd3NTL8YhmhmwtlsrpYXZGJUzu47h0GUi6hsIjWqeq2hR+uhion6oipwfE9YoHlcaMzaa5FmBVizQQ0dMsF15xA1iQbFhhntxKhUXOx5fr0tiVBIy6DGmFbIvPG+Qhky2zJJRbU8SjKIMeInU7NktJkG1VMPZtS7fkPvP1QTyFZ83DNku/A02ZbNMe03Map69qmRY9X/uJG6OvBtCfieZRQ6low71SeNsygoFNfNFbB2D411DA==",
      "SigningCertURL": "https://sns.us-east-1.amazonaws.com/SimpleNotificationService-6aad65c2f9911b05cd53efda11f913f9.pem"
    }

- You would need to subscribe a Lambda function to the Kinesis stream to perform the request confirmation.

- Weirdly, the

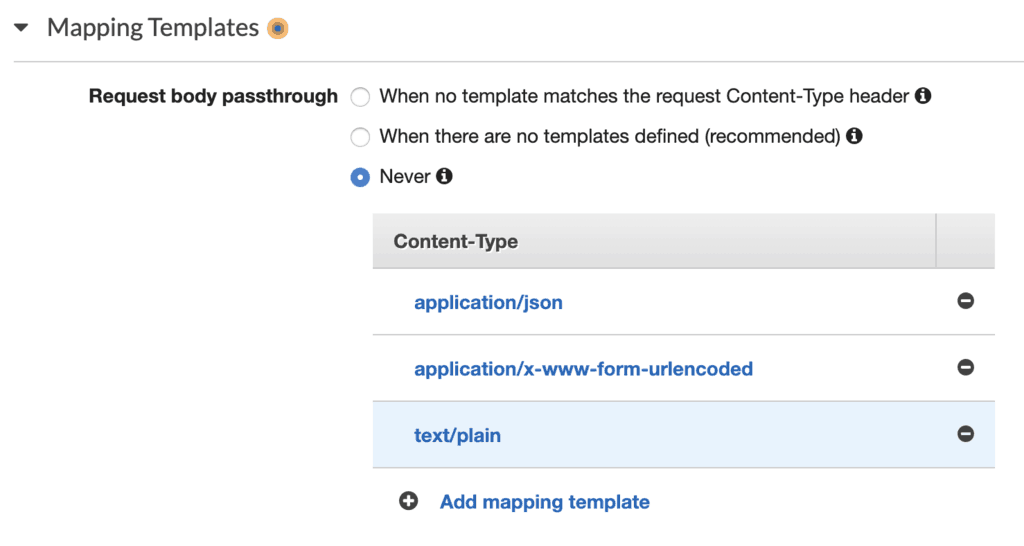

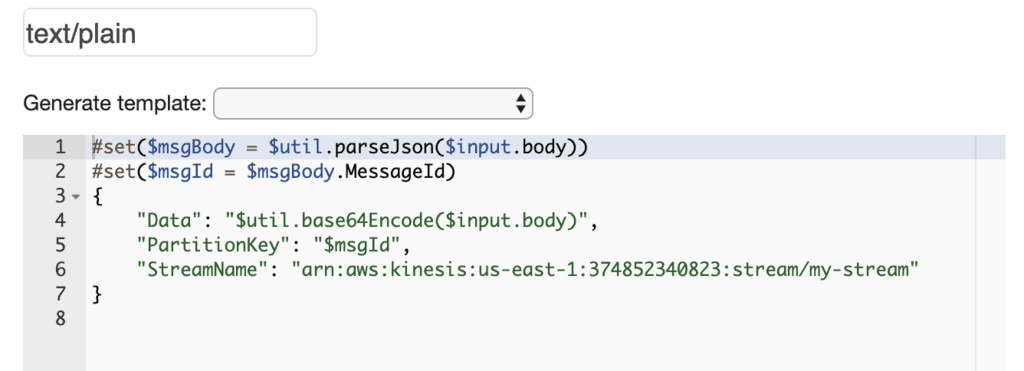

POSTrequest uses the content typetext/plain. So you would need a custom request template mapping in API Gateway fortext/plain.

- You would also need to write some custom VTL code to map the request to a Kinesis PutRecord action.
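To show what that mapping has to produce, here is a minimal Python sketch of the transformation. It is not the VTL from the demo project. It assumes the template wraps the raw text/plain body from SNS into the JSON body that the Kinesis PutRecord action expects (StreamName, base64-encoded Data, PartitionKey). The function name and the choice of partition key are my own assumptions for illustration.

```python
import base64
import json


def build_put_record_request(body: str, stream_name: str, partition_key: str) -> str:
    """Wrap a raw SNS POST body into a Kinesis PutRecord request body.

    body:          the text/plain payload that SNS sent to the endpoint.
    stream_name:   name of the target Kinesis stream.
    partition_key: any string; a per-request id would be one option (assumption).
    """
    # PutRecord expects the Data blob to be base64-encoded.
    data = base64.b64encode(body.encode("utf-8")).decode("ascii")
    return json.dumps(
        {
            "StreamName": stream_name,
            "Data": data,
            "PartitionKey": partition_key,
        }
    )


if __name__ == "__main__":
    sns_body = '{"Type": "Notification", "Message": "hello"}'
    print(build_put_record_request(sns_body, "my-stream", "request-1"))
```

Whatever reads from the stream later has to base64-decode the record data to get the original SNS payload back.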

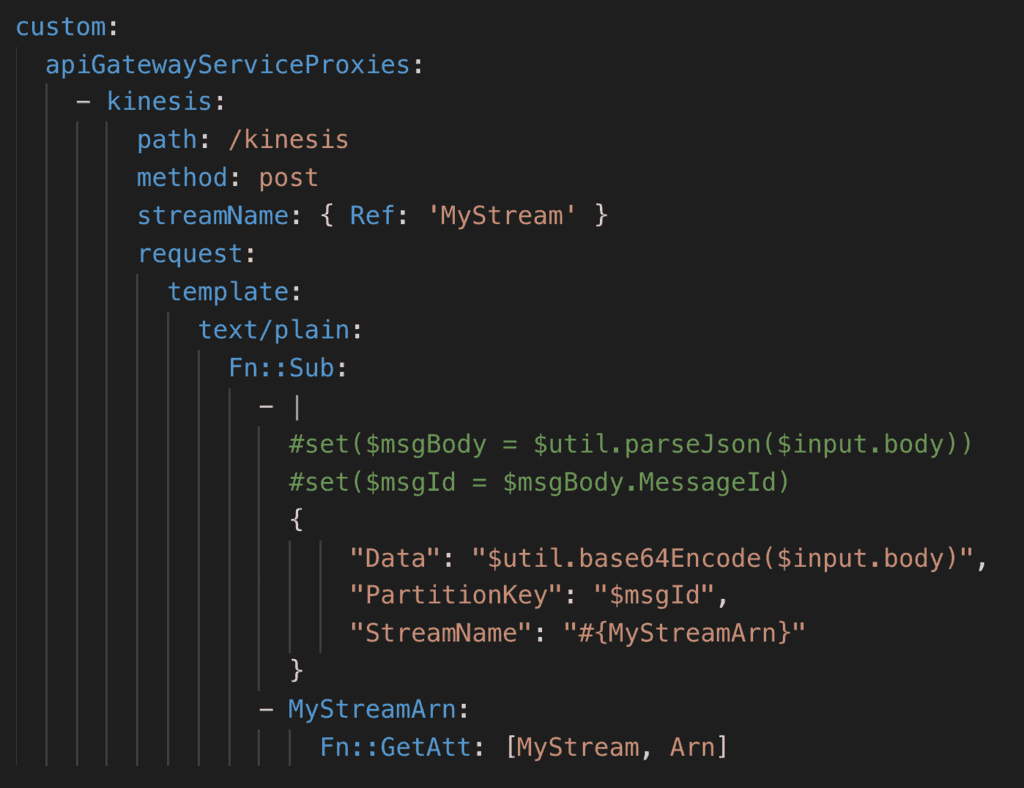

If you use the Serverless framework, then you can use Horike-san’s serverless-apigateway-service-proxy plugin to help you set up the service proxy.

The plugin makes it easy to set up service proxies for API Gateway. All I needed was to add some configuration like the following. Notice that I used Fn::Sub to weave the stream name into the VTL code to avoid hard coding.

This configuration adds a /kinesis endpoint to the API, which forwards the requests from SNS to our Kinesis stream.

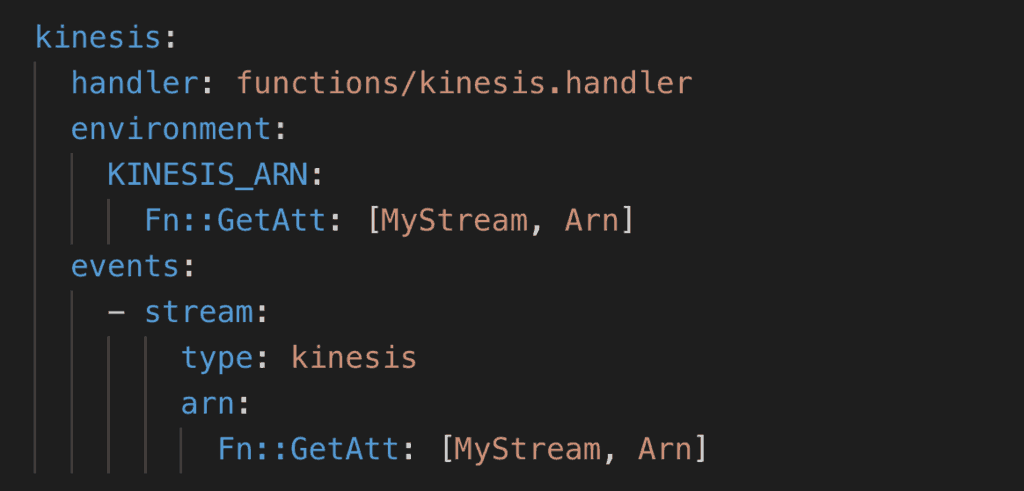

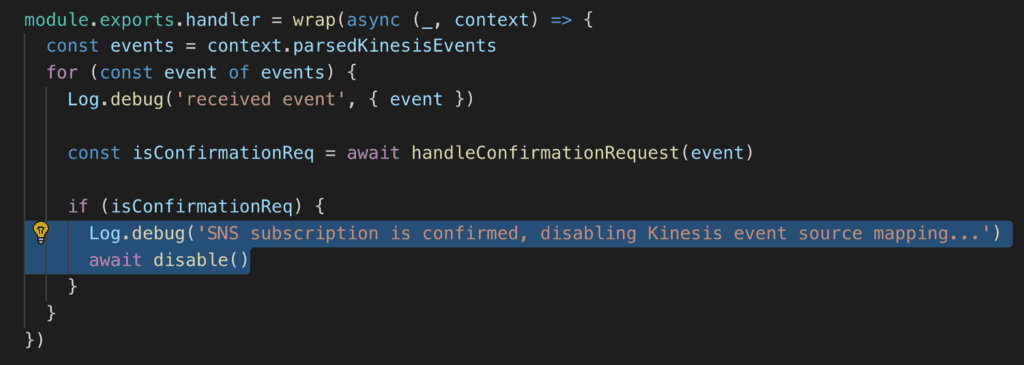

In the demo project, there is also a Lambda function that is subscribed to the Kinesis stream. This function is responsible for confirming the subscription request.
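As a rough idea of what such a function does, here is a Python sketch. It is not the code from the demo project. It assumes each Kinesis record carries the raw SNS POST body, and the function and parameter names are hypothetical. The HTTP call is passed in as a parameter so the logic can be exercised without touching the network.

```python
import base64
import json
import urllib.request
from typing import Callable


def http_get(url: str) -> None:
    """Send a plain GET request and discard the response body."""
    with urllib.request.urlopen(url) as response:
        response.read()


def confirm_subscriptions(event: dict, get: Callable[[str], None] = http_get) -> int:
    """Confirm any SNS subscription requests found in a Kinesis event.

    Returns the number of SubscriptionConfirmation messages that were confirmed.
    """
    confirmed = 0
    for record in event.get("Records", []):
        # Lambda delivers the Kinesis record data base64-encoded.
        payload = base64.b64decode(record["kinesis"]["data"]).decode("utf-8")
        try:
            message = json.loads(payload)
        except json.JSONDecodeError:
            continue  # not an SNS JSON payload, ignore it
        if isinstance(message, dict) and message.get("Type") == "SubscriptionConfirmation":
            # Visiting the SubscribeURL is what confirms the subscription.
            get(message["SubscribeURL"])
            confirmed += 1
    return confirmed
```

A Lambda handler could simply call confirm_subscriptions(event) and log the returned count.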

However, this function only needs to run once – when the SNS topic sends its confirmation request. It would continue to receive all subsequent events and would just ignore them. That seems like such a waste!

What if the function can disable itself after confirming the subscription?

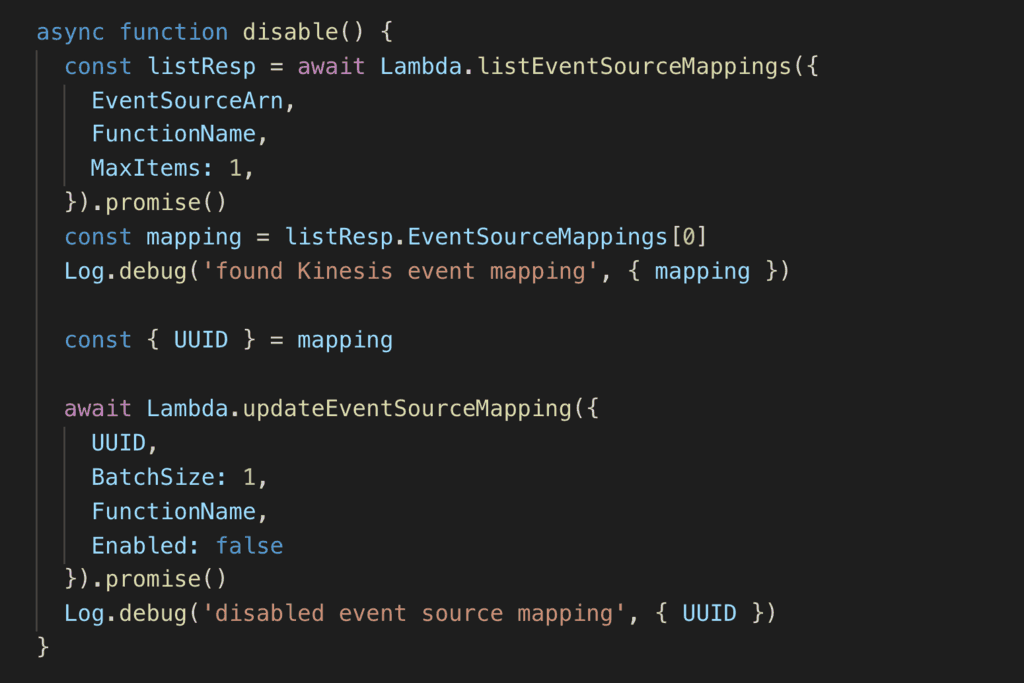

You can do just that by disabling the function’s event source mapping.

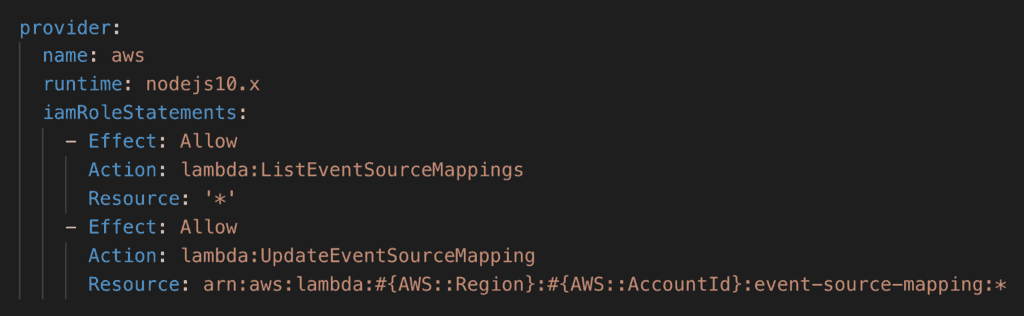

Of course, you would need the relevant IAM permissions for that.
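Here is a minimal sketch of that step in Python, assuming a boto3 Lambda client is passed in. It is not the code from the demo project, and the helper name is hypothetical. It uses the list_event_source_mappings and update_event_source_mapping operations, so the function's role would need the lambda:ListEventSourceMappings and lambda:UpdateEventSourceMapping permissions.

```python
def disable_kinesis_mapping(lambda_client, function_name: str, stream_arn: str) -> list:
    """Disable every event source mapping between a function and a stream.

    lambda_client: a boto3 Lambda client, e.g. boto3.client("lambda").
    Returns the UUIDs of the mappings that were disabled.
    """
    response = lambda_client.list_event_source_mappings(
        FunctionName=function_name,
        EventSourceArn=stream_arn,
    )
    disabled = []
    for mapping in response["EventSourceMappings"]:
        # Enabled=False stops Lambda from polling the stream for this function.
        lambda_client.update_event_source_mapping(
            UUID=mapping["UUID"],
            Enabled=False,
        )
        disabled.append(mapping["UUID"])
    return disabled
```

Inside the function itself, the function name is available as context.function_name, and the stream ARN can be read from the eventSourceARN field of each Kinesis record.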

Trying it out!

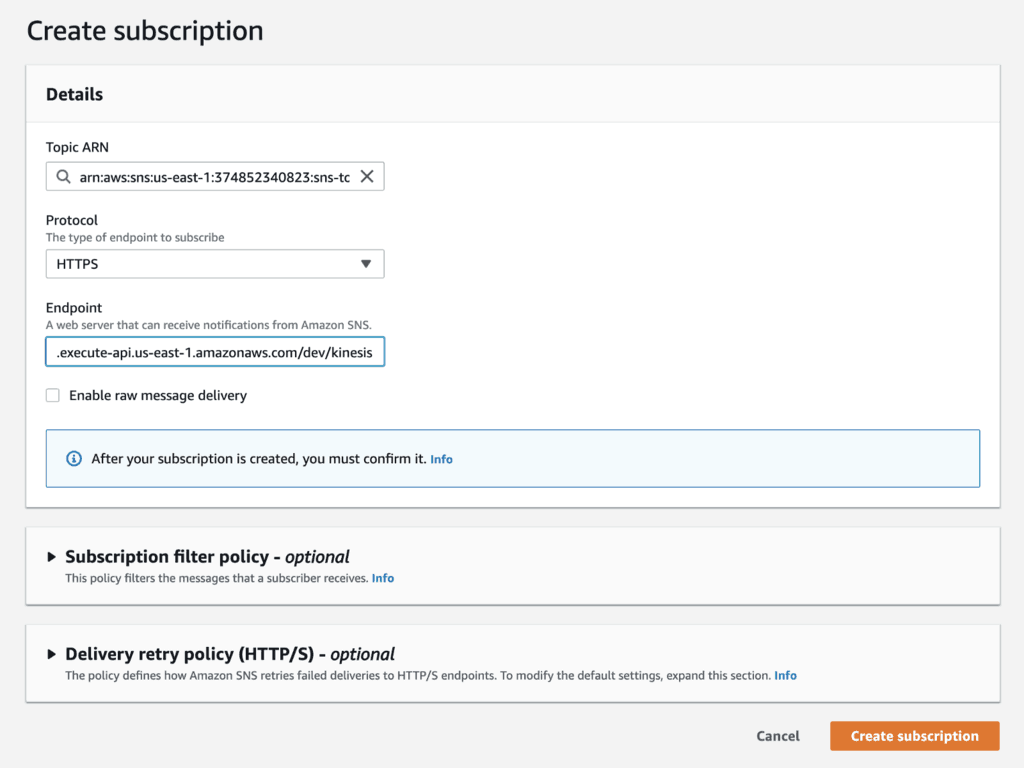

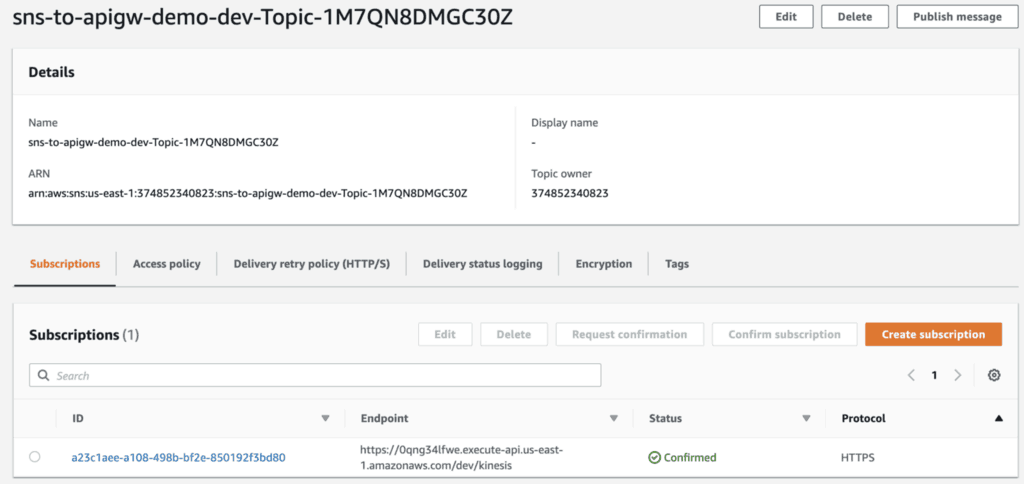

Once the project is deployed, go to the SNS topic. Create a new subscription against the /kinesis endpoint.
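If you prefer to script this step instead of using the console, the following is one possible sketch with a boto3 SNS client passed in. The helper name and the URL in the usage note are placeholders, not values from the demo project.

```python
def subscribe_endpoint(sns_client, topic_arn: str, endpoint_url: str) -> str:
    """Subscribe an HTTP(S) endpoint to an SNS topic.

    sns_client: a boto3 SNS client, e.g. boto3.client("sns").
    Returns the SubscriptionArn field from the response, which SNS reports
    as "pending confirmation" until the endpoint confirms the subscription.
    """
    # The protocol must match the scheme of the endpoint URL.
    protocol = "https" if endpoint_url.startswith("https://") else "http"
    response = sns_client.subscribe(
        TopicArn=topic_arn,
        Protocol=protocol,
        Endpoint=endpoint_url,
    )
    return response["SubscriptionArn"]
```

You would call it with the topic ARN and the full URL of the deployed /kinesis endpoint, for example subscribe_endpoint(boto3.client("sns"), topic_arn, "https://<api-id>.execute-api.<region>.amazonaws.com/dev/kinesis"), where the stage name and URL are placeholders.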

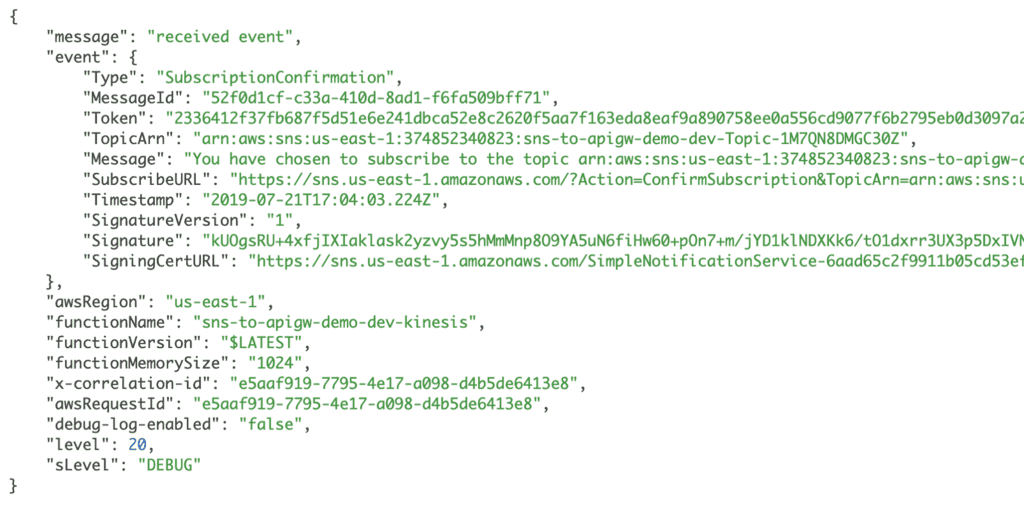

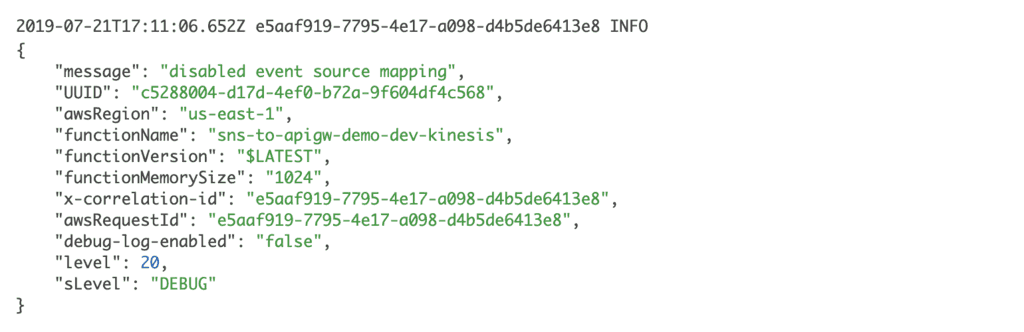

In the Kinesis function’s logs, you should see a SubscriptionConfirmation event from SNS.

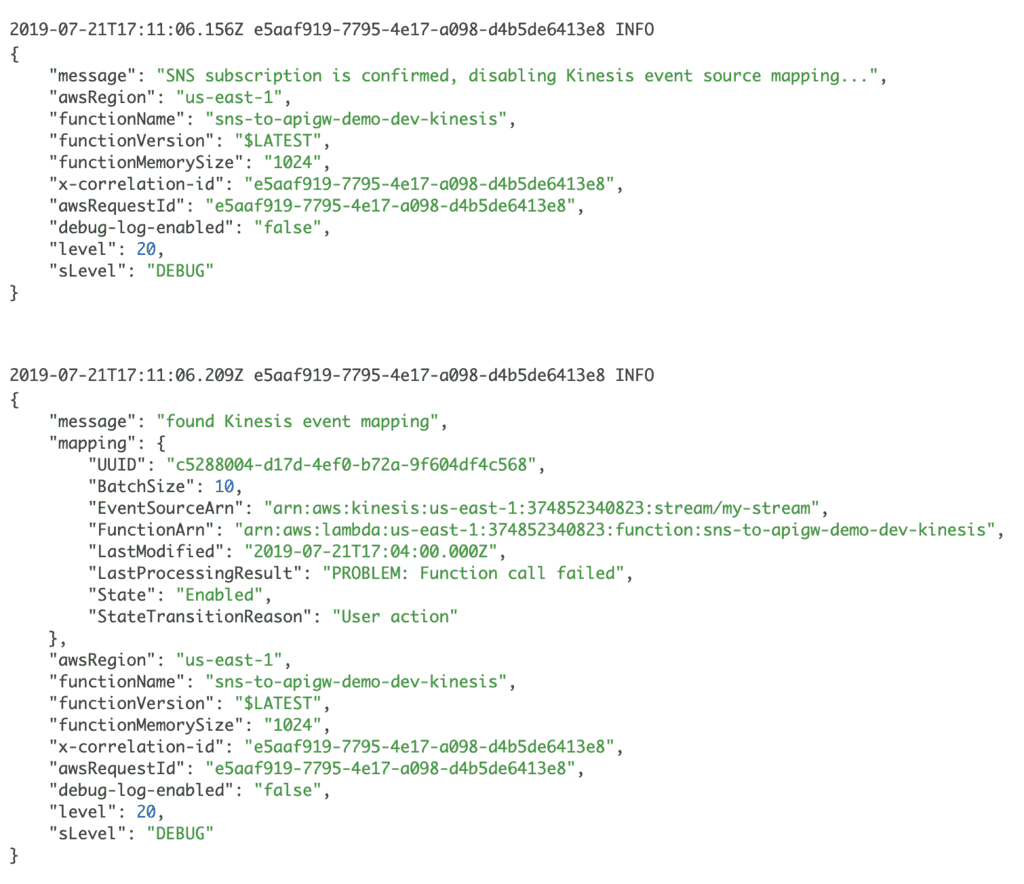

After that, you should see logs indicating that the function is attempting to disable its Kinesis event source mapping.

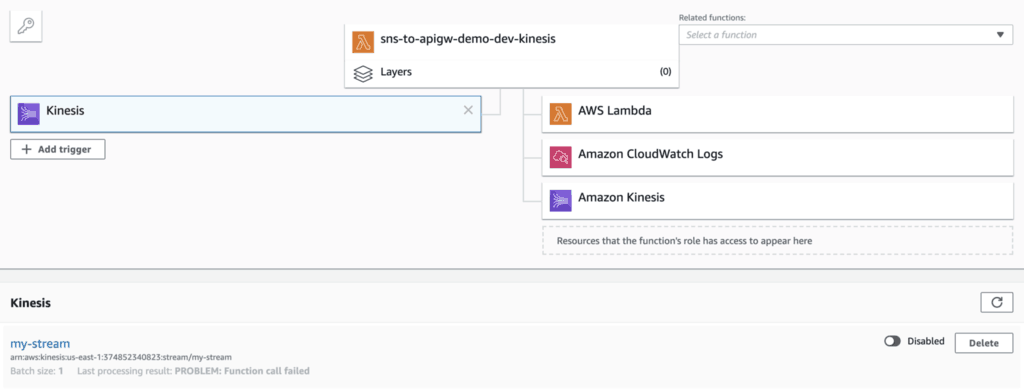

Now go to the Lambda console, and find the Kinesis function. Click on the Kinesis event source, and you should see its status changed to Disabled.

Meanwhile, if you go back to the SNS topic then you should see the subscription has been confirmed. If you publish a message to the topic then the message would be recorded in the stream but would not invoke the Kinesis function.

So that’s it, I hope you enjoyed that! It was a fun little thought experiment and demo project for a nice weekend.
