Yan Cui


This talk by Richard Rodger (of nearForm) was my favourite at this year’s CodeMotion conference in Rome, where he talked about why we need to change the way we think about monitoring when it comes to measuring micro-services.

TL;DR

Identify invariants in your system and use them to measure the health of your system.

When it comes to measuring the health of a human being, we don’t focus on the minute details. Instead, we monitor emergent properties such as pulse, temperature and blood pressure.

Similarly, for micro-services, we need to go beyond the usual metrics of CPU and network flow and focus on the emergent properties of our system. When you have systems with 10s or 100s of moving parts, those rudimentary metrics alone can no longer tell you if your systems are in rude health.

Message flow rate

Messages are fundamental to micro-services style architectures and message behaviour has emergent properties that can be measured.

For instance, you can monitor the rate of user logins, or the number of messages sent to downstream systems. When it comes time to do a rolling deployment (or canary deployment, or blue/green deployment, etc.), noticeable changes to these message rates are an indicator of bugs in your new code.
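As a rough illustration of the idea (not something shown in the talk), the sketch below compares per-interval message counts from before a deployment with counts from the new version and flags a large relative change. The function names and the 20% threshold are assumptions made for this example.

```python
from statistics import mean


def rate_change(baseline_counts, canary_counts):
    """Relative change in the mean per-interval message count."""
    baseline = mean(baseline_counts)
    canary = mean(canary_counts)
    if baseline == 0:
        raise ValueError("baseline rate is zero; relative change is undefined")
    return (canary - baseline) / baseline


def looks_suspicious(baseline_counts, canary_counts, threshold=0.2):
    """True if the message rate moved by more than `threshold` (hypothetical 20%)."""
    return abs(rate_change(baseline_counts, canary_counts)) > threshold


# Logins per minute before the deployment vs. on the newly deployed instances.
before = [100, 102, 98]
after = [60, 55, 65]
if looks_suspicious(before, after):
    print("login rate changed noticeably; investigate the new code")
```

A real system would need to account for normal daily variation in traffic, which is one reason the ratios discussed later are a more robust thing to measure than raw rates.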

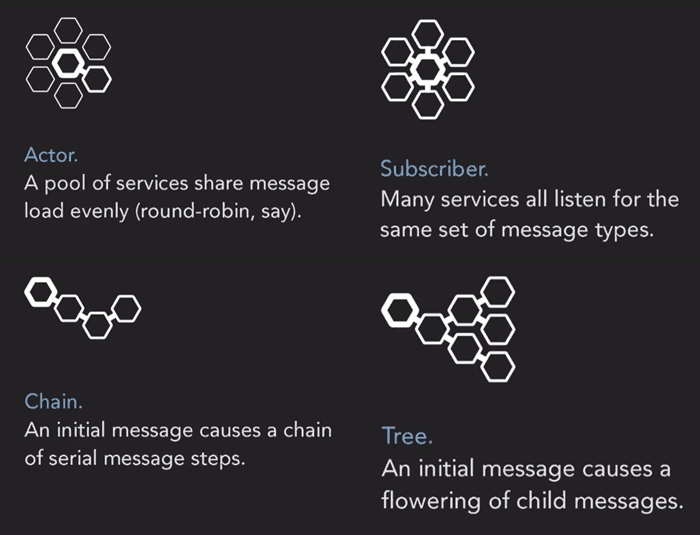

Message Patterns

Here are some simplified message patterns that have emerged from Richard’s experience of building micro-services.

Interestingly, when a micro-services architecture grows organically, it tends to end up looking like the Tree pattern over time, even if you hadn’t designed it that way.

Why Micro-services?

As many others have talked about, micro-services architectures are more complex, so why go down this road at all?

Because it helps us deal with deployment risks and move away from the big-bang deployments associated with monolithic systems (a case in point being the Knight Capital Group tragedy).

Risk is inevitable. There’s no way of completely removing the risk associated with a project, but we can reduce it. The current best practices of unit tests and code reviews aren’t good enough because they only cover the problems that we can anticipate, and they don’t really tell us by how much our risk has been reduced.

(sidebar : this is also why property-based testing is so great. It moves us away from thinking about specific test cases (i.e. problems that we can anticipate), to thinking about properties and invariants about our system. Scott Wlaschin wrote two great posts on adopting property-based testing in F#.)

Instead, we have to accept that the system can fail in ways we can’t anticipate, so it’s more important to measure key behaviours in production than to try to catch every possible case in development.

(sidebar : This echoes the message that I have heard time and again from the Netflix guys. They have also built great tooling to make it easy for them to do canary deployment; measure and confirm system behaviour before rolling out changes; and quickly roll back if necessary.)

It’s possible to do this with monoliths too, with Facebook and Github being prime examples. The way they do it is to use feature flags. The downside of this approach is that you end up with messier code because it’s littered with if-else statements.
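To make the trade-off concrete, here is a minimal sketch of a feature flag. It is a generic illustration rather than how Facebook or Github implement theirs; the flag name, the `is_enabled` helper and the 10% discount are all hypothetical.

```python
def is_enabled(flag, flags):
    """Look up a flag; unknown flags are treated as off."""
    return flags.get(flag, False)


def checkout_total_cents(subtotal_cents, flags):
    if is_enabled("new_pricing", flags):
        # New code path: a hypothetical 10% discount, live only while the flag is on.
        return subtotal_cents * 90 // 100
    else:
        # Old code path: stays in the codebase until the flag is retired.
        return subtotal_cents


print(checkout_total_cents(1000, {"new_pricing": False}))
print(checkout_total_cents(1000, {"new_pricing": True}))
```

Every flagged change adds another branch like this one, which is where the messiness comes from.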

With micro-services, however, your code doesn’t have to be messy anymore.

(sidebar : on a related note, Sam Newman also pointed out a number of other benefits of micro-services style architectures.)

Formal Methods

Richard proposed the use of Formal Methods to decide what to measure in a system. He then gave a shout out to TLA+ by Leslie Lamport (of Paxos and logical clocks fame), which was used by AWS to verify DynamoDB and S3.

The important thing is to identify invariants in your system – things that should always be true about your system – and measure those.

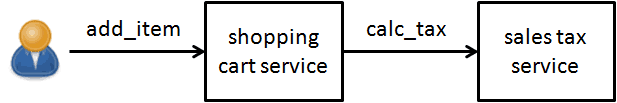

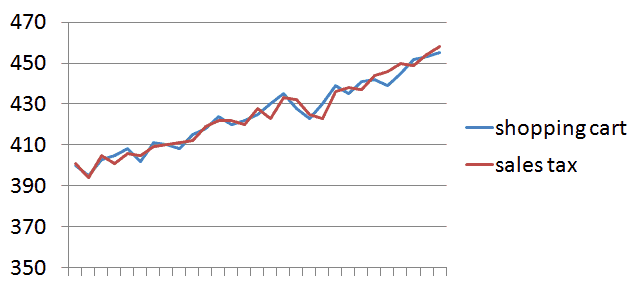

For example, given two services – shopping cart & sales tax – where every request to one results in a request to the other, the ratio of the message rates (i.e. requests) to these services should be 1:1.

Even as the message rates themselves change throughout the day (as dictated by user activity), this 1:1 ratio should always hold. And that is an invariant of our system that we can measure!
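A minimal sketch of checking this invariant, assuming you can count the messages each service received over the same time window. The function name and the 5% tolerance are assumptions for this example; some tolerance is needed because messages in flight at the window boundary will make the counts differ slightly.

```python
def ratio_invariant_holds(cart_count, tax_count, expected_ratio=1.0, tolerance=0.05):
    """Check that cart:tax message counts stay close to the expected ratio."""
    if tax_count == 0:
        # No tax messages at all: the invariant only holds if the cart was idle too.
        return cart_count == 0
    ratio = cart_count / tax_count
    return abs(ratio - expected_ratio) <= tolerance * expected_ratio


# (shopping cart count, sales tax count) per window; volumes vary through the day.
windows = [(120, 120), (950, 948), (400, 310)]
violations = [w for w in windows if not ratio_invariant_holds(*w)]
print(violations)
```

Note that the first two windows differ in volume by almost an order of magnitude yet both satisfy the invariant, while the third is flagged even though its absolute rates look unremarkable.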

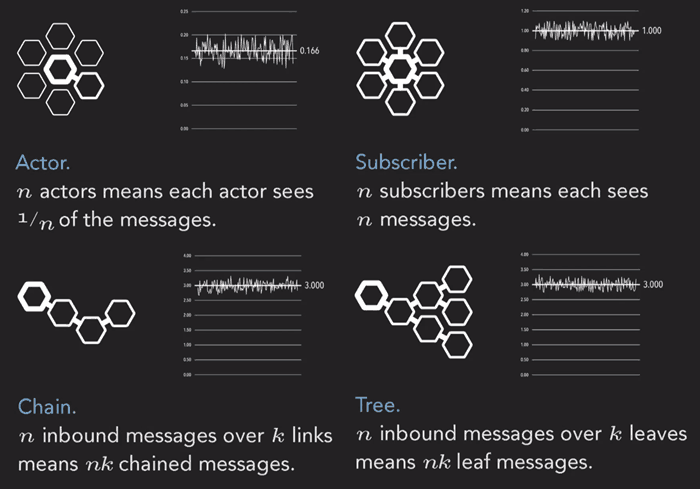

The same invariant applies to any system that follows the Chain pattern we mentioned earlier. In fact, each of the patterns we saw earlier gives rise to a natural invariant.

All your unit tests are checking what can go wrong. By looking for cause/effect relationships and measuring invariants, we turn that on its head and instead validate what must go right in production.

“ask not what can go wrong, ask what must go right…”

– Chris Newcombe, AWS

It becomes an enabler for doing rapid deployment.

When you do a partial deployment and see that it has broken your invariants, then you know it’s time to roll back.

Richard then talked about Gilt’s use of micro-services. Incidentally, Gilt gave a talk at CraftConf on just that, which you can read all about here.

In the oil industry, they have a rule-of-three that says if three of the safety measures are close to critical levels then it counts as a problem, even if none of the measures has gone over its critical level individually.

We can apply the same idea in our own systems, where we can use measures that are approaching failure as indicators of:

- risk of doing a deployment

- risk of a system failure in the near future
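The rule-of-three could be sketched along these lines. The `Measure` type, the metric names, and the choice of 80% of the critical level as "close" are all assumptions for illustration, and the sketch assumes that higher values are worse for every measure.

```python
from dataclasses import dataclass


@dataclass
class Measure:
    name: str
    value: float
    critical: float  # level at which this measure alone counts as a failure


def near_critical(measure, warn_fraction=0.8):
    """True if the measure has reached `warn_fraction` of its critical level."""
    return measure.value >= warn_fraction * measure.critical


def rule_of_three(measures, warn_fraction=0.8, limit=3):
    """Return (is_problem, names of the measures that are close to critical)."""
    close = [m.name for m in measures if near_critical(m, warn_fraction)]
    return len(close) >= limit, close


measures = [
    Measure("error_rate", 0.045, 0.05),
    Measure("p99_latency_ms", 850, 1000),
    Measure("queue_depth", 9000, 10000),
    Measure("cpu_percent", 40, 95),
]
problem, close = rule_of_three(measures)
if problem:
    print("hold the deployment; close to critical:", close)
```

None of these hypothetical measures has crossed its own critical level, but three of them are close enough that the system as a whole is treated as being at risk.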

(sidebar : one aspect of measurement that Richard didn’t touch on in his talk is the granularity of your measurements – minutes, seconds, milliseconds. It determines your time to discovery of a problem, and therefore the subsequent time to correction.

This has been a hot topic at the Monitorama conferences and ex-Netflix cloud architect Adrian Cockcroft did a great keynote on it last year.)

Links

- CraftConf 15 – Takeaways from “Microservice Anti-Patterns”

- CraftConf 15 – Takeaways from “Scaling micro-services at Gilt”

- Microservices – not a free lunch!

- A false choice : Microservices or Monoliths

- QCon London 15 – Takeaways from “Service architecture at scale, lessons from Google and eBay”

- Adrian Cockcroft @ Monitorama PDX 2014

- [Slides] Why Netflix built its own Monitoring System (and you probably shouldn’t)

- Sam Newman : Practical implications of Microservices in 14 Tips

- Seven micro-services architecture problems and solutions

- Knightmare : a DevOps cautionary tale

- How Amazon Web Services uses Formal Methods

- TLA+

